MA Emilio Schmidt

Introduction

This master's thesis investigates the dynamics and stability of filamentary gas structures using numerical simulations. Such filaments are of particular physical interest because they are commonly observed in star-forming regions and are closely connected to the fragmentation of molecular clouds and the formation of stars.

As a first reference case, this work considers the classical isothermal, self-gravitating gas cylinder introduced by Ostriker (1967). This hydrodynamic model provides an analytical equilibrium solution and is therefore well suited for testing the numerical treatment of self-gravity, boundary conditions and gravitational fragmentation.

Building on this hydrodynamic reference case, the long-term goal is to extend the analysis to magnetohydrodynamic filament models. As a theoretical reference for this extension, this work draws on the magnetohydrodynamic equilibrium models of Fiege and Pudritz (2000a). They solved the stationary magnetohydrodynamic equations for cylindrical, self-gravitating filaments with helical magnetic fields and obtained equilibrium solutions for different magnetic configurations. The linear stability of these equilibria was then studied by Fiege and Pudritz (2000b).

The aim of this work is to use the equilibrium models described by Fiege and Pudritz (2000a) as a theoretical reference and guideline for the numerical investigation of filamentary gas structures. The resulting simulations are then interpreted in the context of the linear stability analysis by Fiege and Pudritz (2000b).

Theoretical Framework

The isothermal self-gravitating cylinder

Derivation of the equilibrium density profile

The goal is to find the stationary density profile \(\rho(r)\) of an infinitely long, isothermal, self-gravitating gas cylinder. This configuration corresponds to the classical equilibrium solution of an isothermal cylinder derived by Ostriker (1967). To this end, the hydrostatic Euler equation has to be solved, which is given by:

\begin{align} \boldsymbol{\nabla} P = \boldsymbol{f}_\text{ext} \end{align}

Here, \(P\) is the pressure field and \(\boldsymbol{f}_\text{ext}\) is the force density acting on the gas. In the considered case, this is the gas's own gravity. Since the gravitational field is a conservative force field, it can be expressed by a potential \(\phi_\text{ext}\):

\begin{align} \boldsymbol{f}_\text{ext} = - \rho \boldsymbol{\nabla} \phi_\text{ext} \end{align}

Inserting yields:

\begin{align} \boldsymbol{\nabla} P = - \rho \boldsymbol{\nabla} \phi_\text{ext} \end{align}

The pressure field of the gas under consideration can be described by the equation of state of an isothermal gas, which is given by:

\begin{align} P = \frac{R T}{M} \rho \end{align}

Here, \(R\) denotes the universal gas constant, \(T\) the spatially homogeneous temperature distribution, and \(M\) the molar mass of the gas. Inserting into the hydrostatic Euler equation yields:

\begin{align} \phantom{\Rightarrow}\;& \frac{R T}{M} \boldsymbol{\nabla} \rho = - \rho \boldsymbol{\nabla} \phi_\text{ext} \\[6pt] \Leftrightarrow\;& \frac{1}{\rho} \boldsymbol{\nabla} \rho = - \frac{M}{R T} \boldsymbol{\nabla} \phi_\text{ext} \\[6pt] \Leftrightarrow\;& \boldsymbol{\nabla} \ln(\rho) = - \frac{M}{R T} \boldsymbol{\nabla} \phi_\text{ext} \\[6pt] \Rightarrow\;& \Delta \ln(\rho) = - \frac{M}{R T} \Delta \phi_\text{ext} \end{align}

It proves useful to use the Poisson equation of Newtonian gravity, which is given by:

\begin{align} \Delta \phi_\text{ext} = 4 \pi G \rho \end{align}

Inserting yields:

\begin{align} \phantom{\Rightarrow}\;& \Delta \ln(\rho) = - \frac{4 \pi G M}{R T} \rho \, , \quad \text{def.:} \, a := \frac{4 \pi G M}{R T} \\[6pt] \Leftrightarrow\;& \Delta \ln(\rho) = - a \rho \end{align}

For an infinitely long, straight, self-gravitating gas cylinder without an externally imposed azimuthal or axial structure, there is symmetry with respect to rotation about the cylinder axis and translation along the axis. The underlying equations share this symmetry, which is why the azimuthal and axial derivatives vanish in the considered equation:

\begin{align} \phantom{\Rightarrow}\;& \frac{1}{r} \partial_r \big(r \partial_r \ln(\rho)\big) = - a \rho \\[6pt] \Leftrightarrow\;& \partial_r \bigg(r \frac{\partial_r \rho}{\rho}\bigg) = - a r \rho \\[6pt] \Leftrightarrow\;& \frac{\partial_r \rho}{\rho} - r \bigg(\frac{\partial_r \rho}{\rho}\bigg)^2 + r \frac{\partial_r^2 \rho}{\rho} = - a r \rho \end{align}

Since the density is positive by definition, the following substitution can be made without restriction:

\begin{align} u(r) = \ln\left(\frac{\rho(r)}{\rho_0}\right). \end{align}

Here, \(\rho_0 = \text{const.} > 0\) is a constant reference density. Its specific value is irrelevant for further consideration. It only serves to ensure that the argument of the logarithm is dimensionless. The first two derivatives of the new quantity \(u\) are then given by:

\begin{align} \partial_r u = \frac{\partial_r \rho}{\rho} \quad \text{and} \quad \partial_r^2 u = \frac{\partial_r^2 \rho}{\rho} - \left(\frac{\partial_r \rho}{\rho}\right)^2 \end{align}

Inserting this into the differential equation under consideration yields:

\begin{align} \frac{\partial_r u}{r} + \partial_r^2 u = - a \rho_0 \exp(u) \end{align}

To simplify this equation further, another substitution is performed:

\begin{align} z = u + \sqrt{2} t \, , \quad \text{with} \, t = \sqrt{2} \ln\left(\sqrt{a \rho_0} r\right) \end{align}

With \(\partial_r t = \frac{\sqrt{2}}{r}\), the following applies for the first two derivatives of \(u\) with respect to \(r\):

\begin{align} \partial_r u = \frac{\sqrt{2}}{r} \partial_t u \quad \text{and} \quad \partial_r^2 u = - \frac{\sqrt{2}}{r^2} \partial_t u + \frac{2}{r^2} \partial_t^2 u \end{align}

Inserting yields:

\begin{align} \phantom{\Rightarrow}\;& 2 \partial_t^2 u = - a \rho_0 r^2 \exp(u) \\[6pt] \Leftrightarrow\;& 2 \partial_t^2 u = - \exp(z) \end{align}

Furthermore, the following applies:

\begin{align} \phantom{\Rightarrow}\;& \partial_t^2 z = \partial_t^2 u + \sqrt{2} \partial_t^2 t \\[6pt] \Leftrightarrow\;& \partial_t^2 z = \partial_t^2 u \end{align}

Thus, it follows that:

\begin{align} \phantom{\Rightarrow}\;& 2 \partial_t^2 z = - \exp(z) \\[6pt] \Rightarrow\;& 2 \left(\partial_t z\right) \left(\partial_t^2 z\right) = - \left(\partial_t z\right) \exp(z) \\[6pt] \Leftrightarrow\;& \partial_t\left(\left(\partial_t z\right)^2 + \exp(z)\right) = 0 \\[6pt] \Rightarrow\;& \left(\partial_t z\right)^2 + \exp(z) = c_1 \, , \quad c_1 \in \mathbb{R} \\[6pt] \Leftrightarrow\;& \partial_t z = \pm \sqrt{c_1 - \exp(z)} \end{align}
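The conserved quantity \(\left(\partial_t z\right)^2 + \exp(z) = c_1\) obtained above can be checked numerically. The following minimal sketch integrates \(2 \partial_t^2 z = - \exp(z)\) with a classical fourth-order Runge-Kutta scheme and records how far the first integral drifts from its initial value. The initial values, the step size and all function names are illustrative assumptions and are not taken from the derivation.

```python
import math

def rhs(z, p):
    """Right-hand side of the first-order system z' = p, p' = -exp(z)/2."""
    return p, -0.5 * math.exp(z)

def rk4_step(z, p, dt):
    """Advance (z, p) by one classical fourth-order Runge-Kutta step."""
    k1z, k1p = rhs(z, p)
    k2z, k2p = rhs(z + 0.5 * dt * k1z, p + 0.5 * dt * k1p)
    k3z, k3p = rhs(z + 0.5 * dt * k2z, p + 0.5 * dt * k2p)
    k4z, k4p = rhs(z + dt * k3z, p + dt * k3p)
    z_new = z + dt * (k1z + 2.0 * k2z + 2.0 * k3z + k4z) / 6.0
    p_new = p + dt * (k1p + 2.0 * k2p + 2.0 * k3p + k4p) / 6.0
    return z_new, p_new

def first_integral(z, p):
    """Conserved quantity (dz/dt)^2 + exp(z)."""
    return p * p + math.exp(z)

def max_drift(z0=0.0, p0=1.0, dt=1e-3, n_steps=5000):
    """Return c_1 and the largest deviation of the first integral from c_1."""
    z, p = z0, p0
    c1 = first_integral(z, p)
    drift = 0.0
    for _ in range(n_steps):
        z, p = rk4_step(z, p, dt)
        drift = max(drift, abs(first_integral(z, p) - c1))
    return c1, drift

if __name__ == "__main__":
    c1, drift = max_drift()
    print(f"c_1 = {c1}, maximum drift = {drift:.2e}")
```

A drift that stays at the level of the integration error supports the step from the second-order equation to the first-order equation \(\partial_t z = \pm \sqrt{c_1 - \exp(z)}\).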

At first, it might seem that both signs need to be treated separately. However, it can actually be shown that both equations lead to the same solutions[1]. In the following, therefore, only the equation with a positive sign will be considered. To solve this, the following substitution is used:

\begin{align} z = \ln(y) \end{align}

This results in the following for the differential equation under consideration:

\begin{align} \frac{\partial_t y}{y} = \sqrt{c_1 - y} \end{align}

Separation of variables yields:

\begin{align} \phantom{\Rightarrow}\;& \frac{\text{d}y}{y\sqrt{c_1 - y}} = \text{d}t \\[6pt] \Rightarrow\;& \frac{1}{\sqrt{c_1}} \ln\left(\bigg|\frac{\sqrt{c_1 - y} - \sqrt{c_1}}{\sqrt{c_1 - y} + \sqrt{c_1}}\bigg|\right) = t + C_2 \\[6pt] \Leftrightarrow\;& \bigg|\frac{\sqrt{c_1 - y} - \sqrt{c_1}}{\sqrt{c_1 - y} + \sqrt{c_1}}\bigg| = \exp\left(\sqrt{c_1} t + \sqrt{c_1} C_2\right) \, , \quad \text{with} \, c_2 = \exp\left(\sqrt{c_1} C_2\right) \\[6pt] \Leftrightarrow\;& \bigg|\frac{\sqrt{c_1 - y} - \sqrt{c_1}}{\sqrt{c_1 - y} + \sqrt{c_1}}\bigg| = c_2 \exp\left(\sqrt{c_1} t\right) \, , \quad \text{with} \, A = c_2 \exp\left(\sqrt{c_1} t\right) \\[6pt] \Leftrightarrow\;& \bigg|\frac{\sqrt{c_1 - y} - \sqrt{c_1}}{\sqrt{c_1 - y} + \sqrt{c_1}}\bigg| = A \, , \quad \text{with} \, y = \exp(z) > 0 \Rightarrow \sqrt{c_1 - y} < \sqrt{c_1} \; \forall y \\[6pt] \Leftrightarrow\;& \frac{\sqrt{c_1} - \sqrt{c_1 - y}}{\sqrt{c_1} + \sqrt{c_1 - y}} = A \\[6pt] \Leftrightarrow\;& y = 4 c_1 \frac{A}{(1 + A)^2} \\[6pt] \Leftrightarrow\;& \exp(u) = \frac{4 c_1}{a \rho_0 r^2} \frac{A}{(1 + A)^2} \\[6pt] \Leftrightarrow\;& \rho = \frac{4 c_1 \rho_0}{a \rho_0 r^2} \frac{A}{(1 + A)^2} \end{align}

The quantity \(A\) can be written as follows:

\begin{align} \phantom{\Rightarrow}\;& A = c_2 \exp\left(\sqrt{c_1} t\right) \\[6pt] \Leftrightarrow\;& A = c_2 \exp\left(\sqrt{2 c_1} \ln(\sqrt{a \rho_0} r)\right) \\[6pt] \Leftrightarrow\;& A = c_2 (\sqrt{a \rho_0} r)^{\sqrt{2 c_1}} \end{align}

Define additionally:

\begin{align} b := \sqrt{2 c_1} \quad \text{and} \quad r_0 := \left(c_2^\frac{1}{b} \sqrt{a \rho_0}\right)^{-1} \end{align}

This yields the following general solution for the differential equation under consideration:

\begin{align} \phantom{\Rightarrow}\;& \rho(r) = \frac{2 b^2}{a r^2} \frac{\left(\frac{r}{r_0}\right)^b} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} \\[6pt] \Leftrightarrow\;& \rho(r) = \frac{2 b^2}{a r_0^2} \frac{\left(\frac{r}{r_0}\right)^{b-2}} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} \end{align}
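As a plausibility check, the general solution can be inserted numerically into the radial equation \(\frac{1}{r} \partial_r \big(r \partial_r \ln(\rho)\big) = - a \rho\). The sketch below does this with nested central differences for a few values of \(b\). The function names, the step width `h` and the sample parameters are assumptions chosen for illustration only.

```python
import math

def rho_general(r, a, b, r0):
    """General solution 2 b^2 / (a r0^2) * x^(b-2) / (1 + x^b)^2 with x = r / r0."""
    x = r / r0
    return 2.0 * b**2 / (a * r0**2) * x ** (b - 2.0) / (1.0 + x**b) ** 2

def ode_residual(r, a, b, r0, h=1e-4):
    """Relative residual of (1/r) d/dr (r d ln(rho)/dr) + a * rho at radius r."""
    def flux(s):
        # r * d ln(rho)/dr, approximated by a central difference
        dln = (math.log(rho_general(s + h, a, b, r0))
               - math.log(rho_general(s - h, a, b, r0))) / (2.0 * h)
        return s * dln

    laplacian = (flux(r + h) - flux(r - h)) / (2.0 * h) / r
    rhs = -a * rho_general(r, a, b, r0)
    return abs(laplacian - rhs) / abs(rhs)

if __name__ == "__main__":
    for b in (1.0, 2.0, 3.5):
        for r in (0.5, 1.0, 2.0):
            print(b, r, ode_residual(r, a=1.0, b=b, r0=1.0))
```

The residual is limited only by the finite-difference error, independent of the chosen \(b\), which is consistent with \(b\) and \(r_0\) being free integration constants at this stage.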

[1] Switching the sign merely flips the sign of \(b\), and the final \(\rho\) is invariant under \(b \to -b\).

Boundary conditions

The integration constants \(r_0\) and \(b\) must then be determined using appropriate boundary conditions. For the problem considered, it makes sense to specify boundary conditions for the center of the cylinder, i.e., for \(r = 0\). In the considered case, the density at the center of the cylinder must be finite and nonzero, since both a vanishing and a diverging density at the origin would lead to unphysical behavior:

\begin{align} \rho(r=0) = \rho_c \, , \quad \text{with} \quad 0 < \rho_c < \infty \end{align}

Furthermore, rotational symmetry and the requirement for a smooth, regular solution imply that the density profile at the origin should not have a gradient. Consequently, \(\rho(r)\) has an extremum at \(r=0\), and the following applies:

\begin{align} \partial_r \rho(r) \big|_{r = 0} = 0 \end{align}

It should be noted that the substitution \(t=\sqrt{2}\ln\big(\sqrt{a\rho_0}r\big)\) is only defined for \(r>0\). The differential equation was thus initially solved only on the domain \(r \in \mathbb{R}_{>0}\). In order to formulate boundary conditions at \(r=0\), the solution must be extended continuously to \(r=0\). Accordingly, the central values are understood as right-sided limits:

\begin{align} \rho(r = 0) = \lim\limits_{r \rightarrow 0^+} \rho(r) \quad \text{and} \quad \partial_r \rho(r) \big|_{r = 0} = \lim\limits_{r \rightarrow 0^+} \partial_r \rho(r) \end{align}

With the first boundary condition, the following then applies:

\begin{align} \phantom{\Rightarrow}\;& \lim\limits_{r \rightarrow 0} \rho(r) = \rho_c \\[6pt] \Leftrightarrow\;& \lim\limits_{r \rightarrow 0} \frac{2 b^2}{a r_0^2} \frac{\left(\frac{r}{r_0}\right)^{b-2}} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} = \rho_c \end{align}

Since the denominator converges to \(1\) in the limit case \(r\rightarrow 0\), the local behavior of the density in the immediate vicinity of the origin is determined exclusively by the numerator. This, together with the fact that the density at the origin must not vanish or diverge, directly implies that the following must hold:

\begin{align} b = 2 \end{align}

Inserting into the solution for the density profile provides:

\begin{align} \rho(r) = \frac{8}{a r_0^2} \frac{1} {\left(1 + \left(\frac{r}{r_0}\right)^2\right)^2} \end{align}

Furthermore, the first boundary condition implies that:

\begin{align} \phantom{\Rightarrow}\;& \rho_c = \frac{8}{a r_0^2} \\[6pt] \Leftrightarrow\;& r_0^2 = \frac{8}{a \rho_c} \end{align}

Inserting this gives the stationary density profile of an infinitely long, isothermal, self-gravitating gas cylinder:

\begin{align} \rho(r) = \frac{\rho_c}{\left(1 + \frac{1}{8} a \rho_c r^2\right)^2} \end{align}

It is noticeable that the second boundary condition does not enter explicitly into the determination of the integration constants \(r_0\) and \(b\). The reason is that the requirement of a finite, nonzero central density already restricts the solution so strongly that only the case \(b=2\) remains. The resulting density profile then satisfies the second boundary condition automatically. The second condition is therefore not an independent constraint here; it is already implied by the regularity requirement at the origin.

In this context, the total mass per unit length \(\lambda_{\text{total}}\) of an infinitely long, isothermal gas cylinder held together by its own gravity can be introduced:

\begin{align} \phantom{\Rightarrow}\;& \lambda_{\text{total}} = 2 \pi \int_{0}^{\infty} \rho(r) r \text{d} r \\[6pt] \Leftrightarrow\;& \lambda_{\text{total}} = \frac{8 \pi}{a} \\[6pt] \Leftrightarrow\;& \lambda_{\text{total}} = \frac{2 R T}{G M} \end{align}

The solution for the density profile can therefore also be written as follows:

\begin{align} \rho(r) = \frac{\lambda_{\text{total}}}{\pi r_0^2} \frac{1}{\left(1 + \left(\frac{r}{r_0}\right)^2\right)^2} \end{align}
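The line mass can be evaluated and cross-checked numerically. The following sketch computes \(\lambda_{\text{total}} = \frac{2 R T}{G M}\) in SI units and integrates the density profile with a composite Simpson rule up to a finite cutoff radius. The temperature of 10 K and the molar mass of \(2.33 \cdot 10^{-3}\) kg/mol used in the example are assumed values for cold molecular gas and are not taken from this work; the function names and the cutoff are likewise illustrative.

```python
import math

R_GAS = 8.314462618    # universal gas constant in J / (mol K)
G_NEWTON = 6.6743e-11  # gravitational constant in m^3 / (kg s^2)

def line_mass_total(temperature, molar_mass):
    """Total mass per unit length 2 R T / (G M) in kg / m."""
    return 2.0 * R_GAS * temperature / (G_NEWTON * molar_mass)

def rho_ostriker(r, lam_total, r0):
    """Equilibrium density lam_total / (pi r0^2) / (1 + (r/r0)^2)^2."""
    return lam_total / (math.pi * r0**2) / (1.0 + (r / r0) ** 2) ** 2

def line_mass_numeric(lam_total, r0, r_max, n=20000):
    """Composite Simpson rule for 2 pi int_0^r_max rho(r) r dr (n must be even)."""
    h = r_max / n
    total = 0.0
    for i in range(n + 1):
        r = i * h
        weight = 1.0 if i in (0, n) else (4.0 if i % 2 else 2.0)
        total += weight * rho_ostriker(r, lam_total, r0) * r
    return 2.0 * math.pi * total * h / 3.0

if __name__ == "__main__":
    # Assumed example values: T = 10 K, M = 2.33e-3 kg / mol
    print("lambda_total [kg/m]:", line_mass_total(10.0, 2.33e-3))
    # Dimensionless check: the enclosed line mass approaches lam_total for r_max >> r0
    print("numeric / analytic:", line_mass_numeric(1.0, 1.0, r_max=200.0))
```

Because the density falls off as \(r^{-4}\) at large radii, the truncated integral converges quickly; the missing fraction beyond the cutoff is of order \((r_0 / r_{\max})^2\).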

Gravitational potential of the equilibrium cylinder

With the determined density distribution, the gravitational potential induced by the density distribution can now be determined using Poisson's equation. The Poisson equation, using the given symmetry, is given by:

\begin{align} \phantom{\Rightarrow}\;& \frac{1}{r} \partial_r \left(r \partial_r \phi_\text{ext}(r)\right) = 4 \pi G \rho(r) \\[6pt] \Leftrightarrow\;& \partial_r \left(r \partial_r \phi_\text{ext}(r)\right) = 4 \pi G \rho(r) r \end{align}

Integration yields:

\begin{align} \phantom{\Rightarrow}\;& r \partial_r \phi_\text{ext}(r) = 4 \pi G \int_{0}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime \\[6pt] \Leftrightarrow\;& r \partial_r \phi_\text{ext}(r) = \frac{32 \pi G}{a r_0^2} \int_{0}^{r} \frac{r^\prime}{\left(1 + \left(\frac{r^\prime}{r_0}\right)^2\right)^2} \text{d} r^\prime \\[6pt] \Leftrightarrow\;& r \partial_r \phi_\text{ext}(r) = \frac{16 \pi G}{a} \frac{\left(\frac{r}{r_0}\right)^2} {1 + \left(\frac{r}{r_0}\right)^2} \\[6pt] \Leftrightarrow\;& \partial_r \phi_\text{ext}(r) = \frac{16 \pi G}{a r_0} \frac{\frac{r}{r_0}}{1 + \left(\frac{r}{r_0}\right)^2} \end{align}

Another integration yields the gravitational potential of an infinitely long, isothermal, self-gravitating gas cylinder:

\begin{align} \phantom{\Rightarrow}\;& \phi_\text{ext}(r) = \frac{16 \pi G}{a r_0} \int_{0}^{r} \frac{\frac{r^\prime}{r_0}}{1 + \left(\frac{r^\prime}{r_0}\right)^2} \text{d} r^\prime \\[6pt] \Leftrightarrow\;& \phi_\text{ext}(r) = \frac{8 \pi G}{a} \ln\left(1 + \left(\frac{r}{r_0}\right)^2\right) \\[6pt] \Leftrightarrow\;& \phi_\text{ext}(r) = G \lambda_{\text{total}} \ln\left(1 + \left(\frac{r}{r_0}\right)^2\right) \end{align}
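The potential and the density profile should together satisfy the hydrostatic balance \(\frac{R T}{M} \partial_r \ln(\rho) = - \partial_r \phi_\text{ext}\), where \(\frac{R T}{M} = \frac{G \lambda_{\text{total}}}{2}\) follows from the expression for \(\lambda_{\text{total}}\). The sketch below tests this with a numerically differentiated potential. The argument `g_lam`, which stands for the product \(G \lambda_{\text{total}}\), and all function names are assumptions made for this illustration.

```python
import math

def phi(r, g_lam, r0):
    """Potential G * lambda_total * ln(1 + (r/r0)^2), normalised to phi(0) = 0."""
    return g_lam * math.log1p((r / r0) ** 2)

def dphi_dr_numeric(r, g_lam, r0, h=1e-5):
    """Central-difference approximation of the radial potential gradient."""
    return (phi(r + h, g_lam, r0) - phi(r - h, g_lam, r0)) / (2.0 * h)

def dlnrho_dr(r, r0):
    """Analytic d ln(rho)/dr for rho proportional to (1 + (r/r0)^2)^(-2)."""
    return -4.0 * r / (r0**2 + r**2)

def force_balance_residual(r, g_lam, r0):
    """Residual of (R T / M) d ln(rho)/dr + d phi/dr with R T / M = g_lam / 2."""
    cs2 = 0.5 * g_lam
    return cs2 * dlnrho_dr(r, r0) + dphi_dr_numeric(r, g_lam, r0)

if __name__ == "__main__":
    for r in (0.1, 1.0, 10.0):
        print(r, force_balance_residual(r, g_lam=1.0, r0=1.0))
```

The same comparison can serve as a simple test for a numerical gravity solver, since any deviation from zero then measures the discretisation error of the computed potential.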

The case with a core

The following modification is introduced mainly for numerical reasons. In the simulations, the computational domain does not include the cylinder axis, but starts at a finite inner radius \(R_{\min}\). This inner boundary can be interpreted as an impenetrable core. The core line mass is chosen such that the gravitational field outside \(R_{\min}\) reproduces the field of the Ostriker cylinder as closely as possible.

It can be shown that this system obeys the same differential equation for the density distribution as the case without a core. The starting point is again the hydrostatic Euler equation. It requires the gravitational force \(\text{d} \boldsymbol{F}_{\text{ext}}\) that the gas and the core together exert on a mass element \(\rho(r) \text{d} V\), which is given by:

\begin{align} \text{d} \boldsymbol{F}_{\text{ext}} = - \frac{2 G \lambda(r) \rho(r) \text{d} V}{r} \boldsymbol{e}_r \, , \quad \text{with} \quad r > R_{\text{min}} \end{align}

Here, \(\lambda(r)\) is the mass per unit length enclosed up to radius \(r\). It is given by:

\begin{align} \phantom{\Rightarrow}\;& \lambda(r) = 2 \pi \int_{0}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime \\[6pt] \Leftrightarrow\;& \lambda(r) = \lambda_{\text{core}} + 2 \pi \int_{R_{\text{min}}}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime \end{align}

Here, \(\lambda_{\text{core}}\) is the mass per unit length of the core. Substituting this into the hydrostatic Euler equation gives:

\begin{align} \phantom{\Rightarrow}\;& \boldsymbol{\nabla} P = - \frac{2 G \rho}{r} \left(\lambda_{\text{core}} + 2 \pi \int_{R_{\text{min}}}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime\right) \boldsymbol{e}_r \\[6pt] \Leftrightarrow\;& \frac{R T}{M} \boldsymbol{\nabla} \rho = - \frac{2 G \rho}{r} \left(\lambda_{\text{core}} + 2 \pi \int_{R_{\text{min}}}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime\right) \boldsymbol{e}_r \end{align}

Due to the given cylinder symmetry, the following then applies:

\begin{align} \phantom{\Rightarrow}\;& \frac{R T}{M} \partial_r \rho = - \frac{2 G \rho}{r} \left(\lambda_{\text{core}} + 2 \pi \int_{R_{\text{min}}}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime\right) \\[6pt] \Leftrightarrow\;& \frac{R T}{2 G M} \frac{r \partial_r \rho}{\rho} = - \left(\lambda_{\text{core}} + 2 \pi \int_{R_{\text{min}}}^{r} \rho(r^\prime) r^\prime \text{d} r^\prime\right) \end{align}

Differentiating with respect to \(r\) then yields:

\begin{align} \phantom{\Rightarrow}\;& \frac{R T}{2 G M} \partial_r \left(\frac{r \partial_r \rho}{\rho}\right) = - 2 \pi \rho r \\[6pt] \Leftrightarrow\;& \frac{\partial_r \rho}{\rho} - r \left(\frac{\partial_r \rho}{\rho}\right)^2 + r \frac{\partial_r^2 \rho}{\rho} = - a r \rho \end{align}

Therefore, the general solution of the system under consideration is given by the general solution of the system without the core:

\begin{align} \phantom{\Rightarrow}\;& \rho(r) = \frac{2 b^2}{a r_0^2} \frac{\left(\frac{r}{r_0}\right)^{b-2}} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} \\[6pt] \Leftrightarrow\;& \rho(r) = \frac{b^2 \lambda_{\text{total}}}{4 \pi r_0^2} \frac{\left(\frac{r}{r_0}\right)^{b-2}} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} \end{align}

A condition for the existence of physically meaningful density profiles can be derived from Euler's hydrostatic equation. Under the assumed symmetry, the following then applies:

\begin{align} \phantom{\Rightarrow}\;& \frac{R T}{M} \text{d}_r \rho = - \frac{2 G \lambda \rho}{r} \\[6pt] \Leftrightarrow\;& \frac{R T}{M} \text{d}_r \ln(\rho) = - \frac{2 G \lambda}{r} \end{align}

Inserting the general solution and carrying out the differentiation yields:

\begin{align} \frac{R T}{M} \left(\frac{b - 2}{r} - \frac{2 b}{r} \frac{\left(\frac{r}{r_0}\right)^b}{1 + \left(\frac{r}{r_0}\right)^b}\right) = - \frac{2 G \lambda}{r} \end{align}

Since this equation holds for all \(r \geq R_{\text{min}}\), it must be satisfied in particular on the surface of the core. With \(\lambda(r = R_{\text{min}}) = \lambda_{\text{core}}\), it follows that:

\begin{align} \phantom{\Rightarrow}\;& \frac{R T}{M} \left(\frac{b - 2}{R_{\text{min}}} - \frac{2 b}{R_{\text{min}}} \frac{\left(\frac{R_{\text{min}}}{r_0}\right)^b}{1 + \left(\frac{R_{\text{min}}}{r_0}\right)^b}\right) = - \frac{2 G \lambda_{\text{core}}}{R_{\text{min}}} \\[6pt] \Leftrightarrow\;& \frac{r_0}{R_{\text{min}}} = \left(\frac{b + 2 - 4 \frac{\lambda_{\text{core}}}{\lambda_{\text{total}}}} {b - 2 + 4 \frac{\lambda_{\text{core}}}{\lambda_{\text{total}}}}\right)^{\frac{1}{b}} \end{align}

Since the left-hand side of the equation is positive by definition and \(b > 0\), the fraction in parentheses must be positive as well. For the exponent \(b\), it follows that:

\begin{align} b > \biggl|4 \frac{\lambda_{\text{core}}}{\lambda_{\text{total}}} - 2\biggr| \end{align}

The gas mass per unit length \(\lambda_{\text{gas}}\) can then be expressed in terms of the mass per unit length of the core and the exponent. The gas mass per unit length is generally given by:

\begin{align} \phantom{\Rightarrow}\;& \lambda_{\text{gas}} = 2 \pi \int_{R_{\text{min}}}^{\infty} \rho(r) r \text{d} r \\[6pt] \Leftrightarrow\;& \lambda_{\text{gas}} = \frac{b^2 \lambda_{\text{total}}}{2 r_0^b} \int_{R_{\text{min}}}^{\infty} \frac{r^{b-1}} {\left(1 + \left(\frac{r}{r_0}\right)^b\right)^2} \text{d} r \\[6pt] \Leftrightarrow\;& \lambda_{\text{gas}} = \frac{b \lambda_{\text{total}}}{2} \frac{1}{1 + \left(\frac{R_{\text{min}}}{r_0}\right)^b} \end{align}

Using the expression for \(\frac{r_0}{R_{\text{min}}}\) derived above, it follows:

\begin{align} \phantom{\Rightarrow}\;& \lambda_{\text{gas}} = \frac{b + 2}{4} \lambda_{\text{total}} - \lambda_{\text{core}} \\[6pt] \Leftrightarrow\;& \frac{\lambda_{\text{gas}} + \lambda_{\text{core}}}{\lambda_{\text{total}}} = \frac{b + 2}{4} \end{align}
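The relations for the core case can be collected in two small helper functions. The sketch below evaluates \(\frac{r_0}{R_{\text{min}}}\) for a given exponent \(b\) and a given ratio \(\lambda_{\text{core}} / \lambda_{\text{total}}\), rejects parameter combinations that violate the existence condition, and computes the gas fraction from the integrated profile. The function names and the argument `core_fraction` are hypothetical and only serve this illustration.

```python
def scale_radius_ratio(b, core_fraction):
    """Return r0 / R_min for exponent b and core_fraction = lambda_core / lambda_total."""
    q = 4.0 * core_fraction
    if b <= abs(q - 2.0):
        raise ValueError("no physical profile: b must exceed |4 lambda_core / lambda_total - 2|")
    return ((b + 2.0 - q) / (b - 2.0 + q)) ** (1.0 / b)

def gas_fraction(b, core_fraction):
    """Return lambda_gas / lambda_total = (b / 2) / (1 + (R_min / r0)^b)."""
    x_b = scale_radius_ratio(b, core_fraction) ** (-b)  # (R_min / r0)^b
    return 0.5 * b / (1.0 + x_b)

if __name__ == "__main__":
    for b, f in ((2.0, 0.2), (3.0, 0.1)):
        # Both columns should agree: integrated profile versus (b + 2) / 4 - f
        print(b, f, gas_fraction(b, f), (b + 2.0) / 4.0 - f)
```

Comparing the two printed columns checks the closed-form relation \(\frac{\lambda_{\text{gas}} + \lambda_{\text{core}}}{\lambda_{\text{total}}} = \frac{b + 2}{4}\) against the direct evaluation of the integral.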

Since the core is introduced only as a numerical necessity, its sole purpose is to emulate the gas that would otherwise lie inside the core radius. The case \(b = 2\) is therefore retained. For a given core radius, the core mass per unit length must then be chosen as follows:

\begin{align} \phantom{\Rightarrow}\;& \lambda_{\text{core}} = 2 \pi \int_{0}^{R_{\text{min}}} \rho(r,b=2) r \text{d} r \\[6pt] \Leftrightarrow\;& \lambda_{\text{core}} = \frac{2 \lambda_{\text{total}}}{r_0^2} \int_{0}^{R_{\text{min}}} \frac{r}{\left(1 + \left(\frac{r}{r_0}\right)^2\right)^2} \text{d} r \\[6pt] \Leftrightarrow\;& \lambda_{\text{core}} = \frac{R_{\text{min}}^2} {R_{\text{min}}^2 + r_0^2}\lambda_{\text{total}} \end{align}

This results in the following for the gas mass per unit length in the system:

\begin{align} \lambda_{\text{gas}} = \frac{r_0^2} {R_{\text{min}}^2 + r_0^2}\lambda_{\text{total}} \end{align}
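For setting up a simulation with an inner boundary, these expressions translate directly into code. The following sketch returns the core and gas line masses for \(b = 2\) together with the magnitude of the inward gravitational acceleration \(\frac{2 G \lambda_{\text{core}}}{R_{\text{min}}}\) at the inner boundary. The function name, the argument order and the use of dimensionless units in the example are assumptions and do not describe the actual simulation code.

```python
def core_setup(r_min, r0, lam_total, g_newton):
    """Core line mass, gas line mass and inner-boundary acceleration for b = 2."""
    core_fraction = r_min**2 / (r_min**2 + r0**2)
    lam_core = core_fraction * lam_total
    lam_gas = lam_total - lam_core
    # Magnitude of the inward acceleration 2 G lambda_core / R_min at r = R_min
    g_inner = 2.0 * g_newton * lam_core / r_min
    return lam_core, lam_gas, g_inner

if __name__ == "__main__":
    # Dimensionless example: G = lambda_total = r0 = 1, R_min = 0.1
    lam_core, lam_gas, g_inner = core_setup(0.1, 1.0, 1.0, 1.0)
    print(lam_core, lam_gas, g_inner)
```

With this choice the acceleration at \(r = R_{\text{min}}\) equals the gradient of the core-free potential, \(2 G \lambda_{\text{total}} \frac{R_{\text{min}}}{R_{\text{min}}^2 + r_0^2}\), so the gas outside the core experiences the same field as in the Ostriker cylinder.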

Free-fall time of an infinitely long gas cylinder

In the following, the free fall time \(t_{\text{ff}}\) of an infinitely long gas cylinder is calculated for the previously derived density profile, with the cylinder initially at rest. The calculation is performed in the pressureless limit \(P \rightarrow 0\). The dynamics of the system are then determined solely by its own gravity.

To determine the free fall time, an infinitesimal cylindrical shell with radius \(r_{\text{i}}\) and mass \(\rho(r_i) \text{d} V\) is considered, whose gas, according to Newton's shell theorem, is gravitationally accelerated radially inwards by the mass enclosed by the cylindrical shell. Due to symmetry, the problem is reduced to a one-dimensional, purely radial motion. The equation of motion to be solved is therefore:

\begin{align} \phantom{\Rightarrow}\;& \rho(r_{\text{i}}) \text{d} V \frac{\text{d}^2 r}{\text{d} t^2} = - \frac{2 G \lambda(r_{\text{i}}) \rho(r_{\text{i}}) \text{d} V}{r} \\[6pt] \Leftrightarrow\;& \frac{\text{d}^2 r}{\text{d} t^2} = - \frac{2 G \lambda(r_{\text{i}})}{r} \end{align}

Here, \(\lambda(r_{\text{i}})\) is the mass enclosed by the cylinder shell per unit length, which is given by the following equation for the given density distribution:

\begin{align} \phantom{\Rightarrow}\;& \lambda(r_{\text{i}}) = 2 \pi \int_{0}^{r_{\text{i}}} \rho(r) r \text{d} r \\[6pt] \Leftrightarrow\;& \lambda(r_{\text{i}}) = \frac{2 \lambda_{\text{total}}}{r_0^2} \int_{0}^{r_{\text{i}}} \frac{r}{\left(1 + \left(\frac{r}{r_0}\right)^2\right)^2} \text{d} r \\[6pt] \Leftrightarrow\;& \lambda(r_{\text{i}}) = \frac{r_{\text{i}}^2} {r_{\text{i}}^2 + r_0^2}\lambda_{\text{total}} \end{align}

Inserting yields:

\begin{align} \phantom{\Rightarrow}\;& \frac{\text{d}^2 r}{\text{d} t^2} = - \frac{2 G \lambda_{\text{total}}}{r} \frac{r_{\text{i}}^2}{r_{\text{i}}^2 + r_0^2} \, , \quad \text{def.:} \, K := 2 G \lambda_{\text{total}} \frac{r_{\text{i}}^2}{r_{\text{i}}^2 + r_0^2} \\[6pt] \Leftrightarrow\;& \frac{\text{d}^2 r}{\text{d} t^2} = - \frac{K}{r} \, , \quad \text{with} \ v = \frac{\text{d} r}{\text{d} t} \, , \, \frac{\text{d} v}{\text{d} t} = v \frac{\text{d} v}{\text{d} r} \\[6pt] \Leftrightarrow\;& v \frac{\text{d} v}{\text{d} r} = - \frac{K}{r} \\[6pt] \Leftrightarrow\;& v \text{d} v = - \frac{K}{r} \text{d} r \\[6pt] \Rightarrow\;& \frac{1}{2}v^2 = - K \ln(r) + C \end{align}

From the initial conditions \(r(t=0) = r_{\text{i}}\) and \(v(t=0) = 0\), the following applies to the integration constant:

\begin{align} C = K \ln(r_i) \end{align}

Thus follows:

\begin{align} \phantom{\Rightarrow}\;& \frac{1}{2}v^2 = K \ln\left(\frac{r_{\text{i}}}{r}\right) \\[6pt] \Leftrightarrow\;& v = \pm \sqrt{2 K \ln\left(\frac{r_{\text{i}}}{r}\right)} \end{align}

Since the collapse is directed inwards, only the negative branch of the solution for the velocity is relevant, which is why only this branch will be considered in the following:

\begin{align} \phantom{\Rightarrow}\;& v = - \sqrt{2 K \ln\left(\frac{r_{\text{i}}}{r}\right)} \\[6pt] \Leftrightarrow\;& \frac{\text{d} r}{\text{d} t} = - \sqrt{2 K \ln\left(\frac{r_{\text{i}}}{r}\right)} \end{align}

By integrating this differential equation, the time \(t(r, r_i)\) required for a particle to fall from its starting position \(r_i\) to a radius \(r\), is obtained:

\begin{align} \phantom{\Rightarrow}\;& \int_{0}^{t} \text{d} t^\prime = - \int_{r_{\text{i}}}^{r} \frac{1}{\sqrt{2 K \ln\left(\frac{r_{\text{i}}}{r^\prime}\right)}} \text{d} r^\prime \\[6pt] \Leftrightarrow\;& t(r,r_i) = \sqrt{\frac{\pi}{2 K}} r_{\text{i}} \operatorname{erf}\left(\sqrt{\ln\left(\frac{r_i}{r}\right)}\right) \\[6pt] \Leftrightarrow\;& t(r,r_i) = \frac{1}{2} \sqrt{\frac{\pi\left(r_{\text{i}}^2 + r_0^2\right)}{G \lambda_{\text{total}}}} \operatorname{erf}\left(\sqrt{\ln\left(\frac{r_i}{r}\right)}\right) \end{align}

Here, \(\operatorname{erf}(x)\) is the Gaussian error function, which is defined as follows:

\begin{align} \operatorname{erf}(x) = \frac{2}{\sqrt{\pi}} \int_0^x e^{-t^2} \text{d} t \end{align}

The limit \(r \rightarrow 0\) then yields the free fall time \(t_{\text{ff}}(r_i)\) of a particle that is at its starting position at time \(t = 0\):

\begin{align} \phantom{\Rightarrow}\;& t_{\text{ff}}(r_i) = \lim\limits_{r \rightarrow 0} t(r,r_i) \, , \quad \text{with} \lim\limits_{x \rightarrow \infty} \operatorname{erf}(x) = 1 \\[6pt] \Leftrightarrow\;& t_{\text{ff}}(r_i) = \frac{1}{2} \sqrt{\frac{\pi\left(r_{\text{i}}^2 + r_0^2\right)}{G \lambda_{\text{total}}}} \end{align}

It follows that the free fall time does not approach zero when the starting position approaches zero, but converges to a finite limit value:

\begin{align} \lim_{r_i \rightarrow 0} t_{\text{ff}}(r_i) = \frac{r_0}{2}\sqrt{\frac{\pi}{G\lambda_{\text{total}}}} \end{align}

Since \(t_{\text{ff}}(r_i)\) increases monotonically with \(r_i\), this limit is also a lower bound: even for arbitrarily small starting positions \(r_i>0\), the free fall time cannot become arbitrarily small.
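The closed-form fall time can be compared with a direct quadrature of the integral. The sketch below implements \(t(r, r_i)\) and \(t_{\text{ff}}(r_i)\) with the error function from the Python standard library and evaluates the integral independently after the substitution \(s = \sqrt{\ln(r_i / r^\prime)}\), which removes the integrable singularity at \(r^\prime = r_i\). The argument `g_lam`, standing for \(G \lambda_{\text{total}}\), the function names and the number of quadrature points are illustrative assumptions.

```python
import math

def fall_time(r, r_i, r0, g_lam):
    """Time for a shell starting at rest at r_i to reach r, with 0 < r <= r_i."""
    prefactor = 0.5 * math.sqrt(math.pi * (r_i**2 + r0**2) / g_lam)
    return prefactor * math.erf(math.sqrt(math.log(r_i / r)))

def free_fall_time(r_i, r0, g_lam):
    """Limit r -> 0 of fall_time, using erf(x) -> 1 for x -> infinity."""
    return 0.5 * math.sqrt(math.pi * (r_i**2 + r0**2) / g_lam)

def fall_time_quadrature(r, r_i, r0, g_lam, n=20000):
    """Midpoint rule for the fall-time integral in the variable s = sqrt(ln(r_i / r'))."""
    k = 2.0 * g_lam * r_i**2 / (r_i**2 + r0**2)
    s_max = math.sqrt(math.log(r_i / r))
    ds = s_max / n
    integral = sum(math.exp(-((j + 0.5) * ds) ** 2) for j in range(n)) * ds
    return 2.0 * r_i / math.sqrt(2.0 * k) * integral

if __name__ == "__main__":
    # Dimensionless example: G * lambda_total = r0 = r_i = 1
    print(fall_time(0.1, 1.0, 1.0, 1.0), fall_time_quadrature(0.1, 1.0, 1.0, 1.0))
    print(free_fall_time(1.0, 1.0, 1.0))
```

Agreement between the two values supports the error-function result, and `free_fall_time` provides a natural time unit for pressureless test collapses.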

Linear stability analysis

The aim of this section is to investigate how the hydrostatic equilibrium solution of an infinitely long, isothermal, self-gravitating gas cylinder behaves under perturbations. For the hydrodynamic reference case considered here, the results can be compared directly with the analysis of Nagasawa (1987). In the following, all equilibrium variables are denoted by the index \(0\):

\begin{align} \rho_0(r) = \frac{\lambda_{\text{total}}}{\pi r_0^2}\,\frac{1}{\left(1 + \left(\frac{r}{r_0}\right)^2\right)^2}\,, \quad \boldsymbol{v}_0 = \boldsymbol{0}\,, \quad \phi_{\text{ext},0}(r) = G\,\lambda_{\text{total}}\,\ln\!\left(1 + \left(\frac{r}{r_0}\right)^2\right) \end{align}

Here, \(\boldsymbol{v}_0\) denotes the velocity field of the equilibrium solution, which is exactly zero in hydrostatic equilibrium due to the stationary configuration.

Linearisation of the hydrodynamic equations

Now consider a disturbance of the equilibrium solution. To this end, a dimensionless disturbance parameter \(\varepsilon\) is introduced, in powers of which every hydrodynamic variable \(\mathcal{Q}\) with \(\mathcal{Q} \in \{\rho, \boldsymbol{v}, \phi_{\text{ext}}\}\) can be expanded:

\begin{align} \mathcal{Q}(r,\varphi,z,t) = \mathcal{Q}_0(r) + \sum_{j=1}^{\infty} \varepsilon^j \mathcal{Q}_j(r,\varphi,z,t) \end{align}

Here, \(\mathcal{Q}_0(r)\) denotes the respective equilibrium solution, and the terms \(\mathcal{Q}_j(r,\varphi,z,t)\) represent the \(j\)-th-order perturbations. In the following, only small perturbations are considered, i.e. \(\varepsilon \ll 1\). In this case, terms of higher than linear order in \(\varepsilon\) can be neglected, so that in a linear approximation the following applies:

\begin{align} \mathcal{Q}(r,\varphi,z,t) = \mathcal{Q}_0(r) + \varepsilon \mathcal{Q}_1(r,\varphi,z,t) \end{align}

To investigate how these disturbances evolve over time, the perturbed variables are substituted into the underlying hydrodynamic equations of the system. This results in higher-order contributions in \(\varepsilon\) arising from the products of perturbed terms. Since \(\varepsilon \ll 1\) is assumed, these non-linear contributions can be neglected. The equations are thus linearised.

Substituting the perturbed solution into the continuity equation yields:

\begin{align} \phantom{\Rightarrow}\;& \partial_t \rho + \boldsymbol{\nabla} \cdot \left(\rho \boldsymbol{v}\right) = 0 \\[6pt] \Leftrightarrow\;& \partial_t \left(\rho_0 + \varepsilon \rho_1\right) + \boldsymbol{\nabla} \cdot \left(\left(\rho_0 + \varepsilon \rho_1\right) \varepsilon \boldsymbol{v}_1\right) = 0 \end{align}

At this point, the fact that the disturbance parameter \(\varepsilon\) is a constant scalar and the steady-state solution is time-invariant can be utilised. Furthermore, the flux term contains products of perturbation variables that give rise to second-order contributions in \(\varepsilon\). These terms can be neglected. It therefore follows that:

\begin{align} \phantom{\Rightarrow}\;& \varepsilon \partial_t \rho_1 + \varepsilon \boldsymbol{\nabla} \cdot \left(\rho_0 \boldsymbol{v}_1\right) = 0 \\[6pt] \Leftrightarrow\;& \partial_t \rho_1 + \boldsymbol{\nabla} \cdot \left(\rho_0 \boldsymbol{v}_1\right) = 0 \end{align}

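The same steps are performed for the Euler equation, where the isothermal equation of state is written as \(P = c_s^2 \rho\) with the isothermal sound speed \(c_s = \sqrt{R T / M}\):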

\begin{align} \phantom{\Rightarrow}\;& \partial_t \boldsymbol{v} + \left(\boldsymbol{v} \cdot \boldsymbol{\nabla}\right) \boldsymbol{v} = - \frac{1}{\rho} \boldsymbol{\nabla} P - \boldsymbol{\nabla} \phi_{\text{ext}} \, , \quad \text{with} \; P = c_s^2 \rho \\[6pt] \Leftrightarrow\;& \partial_t \boldsymbol{v} + \left(\boldsymbol{v} \cdot \boldsymbol{\nabla}\right) \boldsymbol{v} = - \frac{c_s^2}{\rho} \boldsymbol{\nabla} \rho - \boldsymbol{\nabla} \phi_{\text{ext}} \\[6pt] \Leftrightarrow\;& \varepsilon \partial_t \boldsymbol{v}_1 = - \frac{c_s^2}{\rho_0 + \varepsilon \rho_1} \boldsymbol{\nabla} \left(\rho_0 + \varepsilon \rho_1\right) - \boldsymbol{\nabla} \left(\phi_{\text{ext},0} + \varepsilon \phi_{\text{ext},1}\right) \\[6pt] \Leftrightarrow\;& \varepsilon \partial_t \boldsymbol{v}_1 = - \frac{c_s^2}{\rho_0} \frac{1}{1 + \varepsilon \frac{\rho_1}{\rho_0}} \boldsymbol{\nabla} \left(\rho_0 + \varepsilon \rho_1\right) - \boldsymbol{\nabla} \left(\phi_{\text{ext},0} + \varepsilon \phi_{\text{ext},1}\right) \, , \quad \text{with} \; \frac{1}{1 + x} = 1 -x + \mathcal{O} \left(x^2\right) \\[6pt] \Rightarrow\;& \varepsilon \partial_t \boldsymbol{v}_1 = - \frac{c_s^2}{\rho_0}\left(1 - \varepsilon \frac{\rho_1}{\rho_0}\right) \boldsymbol{\nabla} \left(\rho_0 + \varepsilon \rho_1\right) - \boldsymbol{\nabla} \left(\phi_{\text{ext},0} + \varepsilon \phi_{\text{ext},1}\right) \\[6pt] \Rightarrow\;& \varepsilon \partial_t \boldsymbol{v}_1 = - \frac{c_s^2}{\rho_0}\left(\boldsymbol{\nabla} \rho_0 + \varepsilon \boldsymbol{\nabla} \rho_1 - \varepsilon \frac{\rho_1}{\rho_0} \boldsymbol{\nabla} \rho_0\right) - \boldsymbol{\nabla} \phi_{\text{ext},0} - \varepsilon \boldsymbol{\nabla} \phi_{\text{ext},1} \end{align}

Here, it can now be used that the following holds for the equilibrium solution:

\begin{align} \frac{c_s^2}{\rho_0} \boldsymbol{\nabla} \rho_0 = - \boldsymbol{\nabla} \phi_{\text{ext},0} \end{align}

This gives the linearised Euler equation:

\begin{align} \partial_t \boldsymbol{v}_1 = - c_s^2 \boldsymbol{\nabla} \left(\frac{\rho_1}{\rho_0}\right) - \boldsymbol{\nabla} \phi_{\text{ext},1} \end{align}
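That the neglected terms are indeed of second order in \(\varepsilon\) can be illustrated with a one-dimensional example. The sketch below compares the exact radial pressure acceleration \(- c_s^2 \partial_r \ln(\rho_0 + \varepsilon \rho_1)\) with its linearised form for a purely radial perturbation. The choice \(\rho_1 / \rho_0 = \cos(r)\), the units \(c_s = r_0 = 1\) and all function names are hypothetical and serve only this demonstration.

```python
import math

def g(r):
    """Hypothetical relative perturbation rho_1 / rho_0 (illustrative choice)."""
    return math.cos(r)

def dg_dr(r):
    """Radial derivative of the relative perturbation."""
    return -math.sin(r)

def dlnrho0_dr(r, r0=1.0):
    """Analytic d ln(rho_0)/dr of the equilibrium profile."""
    return -4.0 * r / (r0**2 + r**2)

def pressure_accel_exact(r, eps, cs2=1.0):
    """-c_s^2 d/dr ln(rho_0 + eps * rho_1) with rho_1 = g * rho_0."""
    return -cs2 * (dlnrho0_dr(r) + eps * dg_dr(r) / (1.0 + eps * g(r)))

def pressure_accel_linear(r, eps, cs2=1.0):
    """Equilibrium term plus the linearised term -eps c_s^2 d(rho_1 / rho_0)/dr."""
    return -cs2 * (dlnrho0_dr(r) + eps * dg_dr(r))

def linearisation_error(r, eps):
    """Absolute difference between exact and linearised pressure acceleration."""
    return abs(pressure_accel_exact(r, eps) - pressure_accel_linear(r, eps))

if __name__ == "__main__":
    for eps in (1e-1, 1e-2, 1e-3):
        print(eps, linearisation_error(1.0, eps))
```

Reducing \(\varepsilon\) by a factor of ten should reduce the error by roughly a factor of one hundred, as expected for a remainder of order \(\varepsilon^2\).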

Substituting the perturbed solution into the Poisson equation yields:

\begin{align} \phantom{\Rightarrow}\;& \Delta \phi_{\text{ext}} = 4 \pi G \rho \\[6pt] \Leftrightarrow\;& \Delta \left(\phi_{\text{ext},0} + \varepsilon \phi_{\text{ext},1}\right) = 4 \pi G \left(\rho_0 + \varepsilon \rho_1\right) \end{align}

The equilibrium solution satisfies the zeroth-order Poisson equation:

\begin{align} \Delta \phi_{\text{ext},0} = 4 \pi G \rho_0 \end{align}

Subtracting this relation and dividing by \(\varepsilon\) gives the linearised Poisson equation:

\begin{align} \Delta \phi_{\text{ext},1} = 4 \pi G \rho_1 \end{align}

Due to the cylindrical geometry of the problem, the resulting linearised equations can be further simplified. The continuity equation thus becomes:

\begin{align} \phantom{\Rightarrow}\;& \partial_t \rho_1 + \frac{1}{r} \partial_r \left(r \rho_0 v_{1,r}\right) + \frac{1}{r} \partial_\varphi \left(\rho_0 v_{1,\varphi}\right) + \partial_z \left(\rho_0 v_{1,z}\right) = 0 \\[6pt] \Leftrightarrow\;& \partial_t \rho_1 + \frac{1}{r} \partial_r \left(r \rho_0 v_{1,r}\right) + \frac{\rho_0}{r} \partial_\varphi v_{1,\varphi} + \rho_0 \partial_z v_{1,z} = 0 \end{align}

For the Euler equation, it is useful to write each component separately. The components are given by:

\begin{align} & \partial_t v_{1,r} + c_s^2 \partial_r \left(\frac{\rho_1}{\rho_0}\right) + \partial_r \phi_{\text{ext},1} = 0 \\[6pt] & \partial_t v_{1,\varphi} + \frac{c_s^2}{r \rho_0} \partial_\varphi \rho_1 + \frac{1}{r} \partial_\varphi \phi_{\text{ext},1} = 0 \\[6pt] & \partial_t v_{1,z} + \frac{c_s^2}{\rho_0} \partial_z \rho_1 + \partial_z \phi_{\text{ext},1} = 0 \end{align}

The Poisson equation becomes:

\begin{align} \frac{1}{r} \partial_r \left(r \partial_r \phi_{\text{ext},1}\right) + \frac{1}{r^2} \partial_\varphi^2 \phi_{\text{ext},1} + \partial_z^2 \phi_{\text{ext},1} = 4 \pi G \rho_1 \end{align}

Separation ansatz and radial boundary value problem

After linearization, the following separation ansatz can be chosen for the perturbation quantities:

\begin{align} \mathcal{Q}_1(r,\varphi,z,t) = \hat{\mathcal{Q}}(r)\exp\left(i m \varphi\right) \exp\left(i k z\right)\exp\left(\sigma t\right) \end{align}

To motivate this ansatz, it is convenient to collect the perturbation quantities into a vector \(\boldsymbol{w}\):

\begin{align} \boldsymbol{w} = \left(\rho_1, v_{1,r}, v_{1,\varphi}, v_{1,z}, \phi_{\text{ext},1}\right)^{\mathsf{T}} \end{align}

The coupled system of differential equations to be solved can then be written in compact form as follows:

\begin{align} \mathbf{M} \partial_t \boldsymbol{w} = \mathbf{L} \boldsymbol{w} \end{align}

Here, \(\mathbf{L}\) is the matrix representation of the linear operator that results from the linearized governing equations and contains derivatives with respect to \(r\), \(\varphi\), and \(z\):

\begin{align} \mathbf{L} = \begin{pmatrix} 0 & -\frac{1}{r} \partial_r r \rho_0 & -\frac{\rho_0}{r} \partial_\varphi & -\rho_0 \partial_z & 0\\ -c_s^2 \partial_r \frac{1}{\rho_0} & 0 & 0 & 0 & -\partial_r\\ -\frac{c_s^2}{r \rho_0} \partial_\varphi & 0 & 0 & 0 & -\frac{1}{r} \partial_\varphi\\ -\frac{c_s^2}{\rho_0} \partial_z & 0 & 0 & 0 & -\partial_z\\ 4 \pi G & 0 & 0 & 0 & - \frac{1}{r} \partial_r r \partial_r - \frac{1}{r^2} \partial_\varphi^2 - \partial_z^2 \end{pmatrix} \end{align}

Intuitively, \(\mathbf{L}\) collects the contributions from the continuity equation, the Euler equation, and the Poisson equation.

The matrix \(\mathbf{M}\) is constant and encodes which equations contain time derivatives, for example:

\begin{align} \mathbf{M} = \operatorname{diag}(1,1,1,1,0) \end{align}

In this representation, the Poisson equation would correspond to the fifth row of the system of equations. However, this assignment is not unique, but rather a matter of the chosen ordering of the variables in \(\boldsymbol{w}\) or of the equations in the operator \(\mathbf{L}\). The only essential point is that the assignment is kept consistent.

Since the linear operator has no explicit dependence on \(\varphi\), the linearized equations are rotationally invariant in \(\varphi\). This means that a rotation of a solution about the \(z\)-axis is again a solution of the same linear system. To show this, the rotation operator \(\mathcal{T}_{\varphi_0}\) is introduced, which is defined by:

\begin{align} \mathcal{T}_{\varphi_0} f(r,\varphi,z,t) := f(r,\varphi+\varphi_0,z,t) \end{align}

Rotational invariance is equivalent to the statement that both the time derivative and the linear operator commute with the rotation operator \(\mathcal{T}_{\varphi_0}\).

The time derivative commutes trivially with \(\mathcal{T}_{\varphi_0}\), since \(\partial_t\) does not act on the \(\varphi\)-coordinate and \(\mathcal{T}_{\varphi_0}\) does not change time. Moreover, for sufficiently smooth functions \(f\), the following relations hold:

\begin{align} \partial_\varphi\left(\mathcal{T}_{\varphi_0} f\right) = \mathcal{T}_{\varphi_0}\left(\partial_\varphi f\right) \qquad \text{and} \qquad \chi(r,z)\left(\mathcal{T}_{\varphi_0} f\right) = \mathcal{T}_{\varphi_0}\left(\chi(r,z) f\right) \end{align}

Here, \(\chi(r,z)\) is an arbitrary function that depends on \(r\) and \(z\). Since the entries of the linear operator consist of sums and products of such building blocks, and since each of them commutes with \(\mathcal{T}_{\varphi_0}\), it follows that \(\mathbf{L}\) also commutes with the rotation operator as a whole.

It turns out that Fourier modes \(\exp(i m \varphi)\) are a convenient choice for the \(\varphi\)-dependence. The reason is that these functions are eigenfunctions of the rotation operator:

\begin{align} \mathcal{T}_{\varphi_0}\exp(im\varphi) = \exp\left(im(\varphi+\varphi_0)\right) = \exp(im\varphi_0)\exp(im\varphi) \end{align}

Each mode \(\exp(i m \varphi)\) is therefore solely multiplied by a constant phase factor \(\exp(i m \varphi_0)\) under a rotation about the \(z\)-axis.

Since both the time derivative and the linear operator commute with the rotation operator, they possess a common set of eigenfunctions. As a result, the \(\varphi\)-dependence of the perturbation quantities can be expanded in Fourier modes. Moreover, it can be shown that different azimuthal mode numbers \(m\) do not couple to one another, so that each Fourier mode evolves independently. For the stability analysis, it is therefore sufficient to consider a single Fourier mode with fixed mode number \(m\).

From the \(2\pi\)-periodicity in \(\varphi\), it follows that the perturbation must be single-valued after one full rotation. Hence, it must hold that:

\begin{align} \mathcal{Q}_1(r,\varphi+2\pi,z,t) = \mathcal{Q}_1(r,\varphi,z,t) \end{align}

For the \(\varphi\)-dependence in the separation ansatz, this condition is satisfied if and only if the following holds:

\begin{align} \exp(im2\pi) = 1 \end{align}

It follows immediately that \(m \in \mathbb{Z}\) must hold.

Analogously to the Fourier decomposition in \(\varphi\), the \(z\)-dependence can also be decomposed into eigenfunctions of the translation operator along the \(z\)-axis. The \(z\)-dependence of the perturbation is therefore given by:

\begin{align} \mathcal{Q}_1(z) \propto \exp\left(ikz\right) \, , \quad \text{with} \; k \in \mathbb{R} \end{align}

Here, \(k\) is the axial wavenumber. In contrast to \(\varphi\), there is generally no periodicity condition in the \(z\)-direction. Therefore, the axial wavenumber is not restricted to discrete values, but can take any real value.

Analogously, the time dependence can also be decomposed into eigenfunctions of the time-evolution operator. The temporal evolution of the perturbation is therefore given by:

\begin{align} \mathcal{Q}_1(t) \propto \exp\left(\sigma t\right)\, , \quad \text{with} \; \sigma \in \mathbb{C} \end{align}

Here, \(\sigma\) is generally complex. The real part \(\Re(\sigma)\) describes the growth or damping rate of the perturbation. For \(\Re(\sigma) > 0\), the perturbation grows exponentially, and the system is unstable in this case. For \(\Re(\sigma) < 0\), the perturbation decays exponentially, and the system is therefore stable. The imaginary part \(\Im(\sigma)\) corresponds to an oscillation frequency, that is, for \(\Im(\sigma) \neq 0\), the evolution is additionally oscillatory. In the special case \(\Re(\sigma) = 0\), the amplitude remains constant, and the solution is purely oscillatory.
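The case distinction above can be summarised in a few lines of Python. This is only an illustrative sketch: the function name `classify_mode` and the tolerance `tol` are assumptions introduced here and are not part of the thesis code.

```python
def classify_mode(sigma: complex, tol: float = 1e-12) -> str:
    """Classify a mode exp(sigma * t) by the real and imaginary part of sigma.

    `tol` is a hypothetical threshold below which a part is treated as zero.
    """
    if sigma.real > tol:
        kind = "growing"      # Re(sigma) > 0: exponential growth, unstable
    elif sigma.real < -tol:
        kind = "decaying"     # Re(sigma) < 0: exponential decay
    else:
        kind = "neutral"      # Re(sigma) = 0: constant amplitude
    if abs(sigma.imag) > tol:
        kind += ", oscillatory"  # Im(sigma) != 0: additional oscillation
    return kind
```

For example, `classify_mode(complex(-0.3, 2.0))` describes a damped oscillation, while a purely imaginary `sigma` corresponds to the purely oscillatory special case.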

The radial part of the linearized equations does not have constant coefficients. As a result, the equations are not translationally invariant in \(r\). Consequently, plane waves in \(r\) are not eigenfunctions of the radial operator, and the radial structure of the perturbations must be determined explicitly.

By inserting the following separation ansatz for the perturbation quantities, one obtains a coupled system of ordinary differential equations in \(r\):

\begin{align} & \rho_1(r,\varphi,z,t) = \hat{\rho}(r)\exp\left(im\varphi\right)\exp\left(ikz\right)\exp\left(\sigma t\right) \\[6pt] & v_{1,r}(r,\varphi,z,t) = \hat{v}_r(r)\exp\left(im\varphi\right)\exp\left(ikz\right)\exp\left(\sigma t\right) \\[6pt] & v_{1,\varphi}(r,\varphi,z,t) = \hat{v}_\varphi(r)\exp\left(im\varphi\right)\exp\left(ikz\right)\exp\left(\sigma t\right) \\[6pt] & v_{1,z}(r,\varphi,z,t) = \hat{v}_z(r)\exp\left(im\varphi\right)\exp\left(ikz\right)\exp\left(\sigma t\right) \\[6pt] & \phi_{\text{ext},1}(r,\varphi,z,t) = \hat{\phi}_{\text{ext}}(r)\exp\left(im\varphi\right)\exp\left(ikz\right) \exp\left(\sigma t\right) \end{align}

Inserting the ansatz into the linearized continuity equation yields:

\begin{align} \sigma \hat{\rho} + \frac{1}{r} \partial_r \left(r \rho_0 \hat{v}_r\right) + \frac{i m \rho_0}{r} \hat{v}_\varphi + i k \rho_0 \hat{v}_z = 0 \end{align}

For the components of the Euler equation, inserting the ansatz yields:

\begin{align} & \sigma \hat{v}_r + c_s^2 \partial_r \left(\frac{\hat{\rho}}{\rho_0}\right) + \partial_r \hat{\phi}_{\text{ext}} = 0 \\[6pt] & \sigma \hat{v}_\varphi + \frac{i m c_s^2}{r} \frac{\hat{\rho}}{\rho_0} + \frac{i m}{r} \hat{\phi}_{\text{ext}} = 0 \\[6pt] & \sigma \hat{v}_z + i k c_s^2 \frac{\hat{\rho}}{\rho_0} + i k \hat{\phi}_{\text{ext}} = 0 \end{align}

Solving these equations for the velocity amplitudes gives:

\begin{align} & \hat{v}_r = - \frac{1}{\sigma} \left(c_s^2 \partial_r \left(\frac{\hat{\rho}}{\rho_0}\right) + \partial_r \hat{\phi}_{\text{ext}}\right) \\[6pt] & \hat{v}_\varphi = - \frac{im}{\sigma r} \left(c_s^2 \frac{\hat{\rho}}{\rho_0} + \hat{\phi}_{\text{ext}}\right) \\[6pt] & \hat{v}_z = - \frac{ik}{\sigma} \left(c_s^2 \frac{\hat{\rho}}{\rho_0} + \hat{\phi}_{\text{ext}}\right) \end{align}

Finally, inserting the ansatz into the Poisson equation yields:

\begin{align} \frac{1}{r} \partial_r \left(r \partial_r \hat{\phi}_\text{ext}\right) - \left(\frac{m^2}{r^2} + k^2\right) \hat{\phi}_\text{ext} = 4 \pi G \hat{\rho} \end{align}

By substituting the velocity components obtained from the Euler equation into the continuity equation, the original system of five coupled differential equations can be reduced to a system of two coupled differential equations for \(\hat{\rho}(r)\) and \(\hat{\phi}_{\text{ext}}(r)\). The continuity equation then takes the form:

\begin{align} \frac{1}{r} \partial_r \left(r \rho_0 \left(c_s^2 \partial_r \left(\frac{\hat{\rho}}{\rho_0}\right) + \partial_r \hat{\phi}_\text{ext}\right)\right) = \sigma^2 \hat{\rho} + \rho_0 \left(\frac{m^2}{r^2} + k^2\right) \left(c_s^2 \frac{\hat{\rho}}{\rho_0} + \hat{\phi}_{\text{ext}}\right) \end{align}

To solve this system of coupled differential equations, suitable boundary conditions are required. Since the relevant dynamics are essentially determined by the region of the cylinder and the equilibrium solution is localized within a finite radial range around the characteristic radius \(r_0\), it is required that the perturbation vanishes at large radial distances. In the limit \(r \to \infty\), the perturbation quantities must therefore tend to zero:

\begin{align} \lim\limits_{r\to\infty}\hat{\rho}(r) = 0 = \lim\limits_{r\to\infty}\hat{\phi}_\text{ext}(r) \end{align}

The second boundary condition requires a distinction between two cases. For an axisymmetric perturbation about the cylinder axis, that is, for \(m = 0\), rotational symmetry together with the requirement of a smooth and regular solution implies that the perturbation quantities must not have gradients at the origin. This is analogous to the derivation of the analytical equilibrium solution of the infinitely long, isothermal, self-gravitating gas cylinder. In particular, the radial derivatives must vanish at the center:

\begin{align} \partial_r \hat{\rho}(r) \big|_{r = 0} = 0 = \partial_r \hat{\phi}_\text{ext}(r) \big|_{r = 0} \end{align}

For non-axisymmetric azimuthal perturbations, that is, for \(m \neq 0\), the perturbation quantities, by contrast, exhibit an explicit angular dependence. On the cylinder axis, however, the angular coordinate loses its geometric meaning, since all angular directions correspond to the same point there. If the amplitudes \(\hat{\rho}(0)\) and \(\hat{\phi}_{\text{ext}}(0)\) were nonzero, the perturbation quantities would formally depend on \(\varphi\) on the axis and would therefore no longer represent a uniquely defined function. To ensure the uniqueness of the solution on the cylinder axis, the amplitudes of non-axisymmetric perturbations must vanish there:

\begin{align} \hat{\rho}(r=0) = 0 = \hat{\phi}_\text{ext}(r=0) \end{align}

It is convenient to introduce some dimensionless quantities that further simplify the system of differential equations. A natural choice is to nondimensionalize the perturbation quantities as follows:

\begin{align} u := \frac{\hat{\rho}}{\rho_c} \quad \text{and} \quad \psi := \frac{\hat{\phi}_\text{ext}}{c_s^2} \end{align}

Furthermore, by introducing the dimensionless radial coordinate \(x := \frac{r}{r_0}\), the density profile of the equilibrium solution can be written in compact form as:

\begin{align} \rho_0(x) = \rho_c q(x) \, , \quad \text{with} \; q(x) := \frac{1}{\left(1 + x^2\right)^2} \end{align}

Moreover, the dimensionless parameters \(\bar{k}\) and \(\bar{\sigma}\) can be introduced:

\begin{align} \bar{k} := k r_0 \quad \text{and} \quad \bar{\sigma} := \sigma \frac{r_0}{c_s} \end{align}

Substituting into the continuity equation yields:

\begin{align} \phantom{\Rightarrow}\;& \frac{\rho_c c_s^2}{r_0^2} \frac{1}{x} \partial_x \left(x q \left(\partial_x \left(\frac{u}{q}\right) + \partial_x \psi\right)\right) = \sigma^2 \rho_c u + \frac{\rho_c c_s^2}{r_0^2} q \left(\frac{m^2}{x^2} + k^2 r_0^2\right) \left(\frac{u}{q} + \psi\right) \\[6pt] \Leftrightarrow\;& \frac{1}{x} \partial_x \left(x q \left(\partial_x \left(\frac{u}{q}\right) + \partial_x \psi\right)\right) = \bar{\sigma}^2 u + q \left(\frac{m^2}{x^2} + k^2 r_0^2\right) \left(\frac{u}{q} + \psi\right) \\[6pt] \Leftrightarrow\;& \frac{1}{x} \partial_x \left(x \left(\partial_x u - \frac{\partial_x q}{q} u + q \partial_x \psi\right)\right) = \bar{\sigma}^2 u + \left(\frac{m^2}{x^2} + \bar{k}^2\right) \left(u + q \psi\right) \end{align}

An analogous procedure yields for the Poisson equation:

\begin{align} \phantom{\Rightarrow}\;& \frac{c_s^2}{r_0^2} \frac{1}{x} \partial_x \left(x \partial_x \psi\right) - \frac{c_s^2}{r_0^2} \left(\frac{m^2}{x^2} + k^2 r_0^2\right) \psi = 4 \pi G \rho_c u \\[6pt] \Leftrightarrow\;& \frac{1}{x} \partial_x \left(x \partial_x \psi\right) - \left(\frac{m^2}{x^2} + \bar{k}^2\right) \psi = \frac{4 \pi G \rho_c r_0^2}{c_s^2} u \end{align}

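Using the relation between \(r_0\) and \(\rho_c\) introduced above for the equilibrium solution, the prefactor on the right-hand side evaluates to \(\frac{4 \pi G \rho_c r_0^2}{c_s^2} = 8\), so that: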

\begin{align} \frac{1}{x} \partial_x \left(x \partial_x \psi\right) - \left(\frac{m^2}{x^2} + \bar{k}^2\right) \psi = 8 u \end{align}

Since \(u\) and \(\psi\) are essentially rescaled forms of the density and potential perturbations, respectively, the boundary conditions for the perturbation quantities carry over directly to \(u\) and \(\psi\). The same boundary conditions therefore apply. For \(x \to \infty\), it thus follows that:

\begin{align} \lim\limits_{x\to\infty}u(x) = 0 = \lim\limits_{x\to\infty}\psi(x) \end{align}

On the cylinder axis, that is, for \(x = 0\), one must still distinguish between the cases \(m = 0\) and \(m \neq 0\). Accordingly, the following holds there:

\begin{align} &\partial_x u(x) \big|_{x = 0} = 0 = \partial_x \psi(x) \big|_{x = 0} \qquad \text{for } m=0 \\[6pt] &u(0)=0=\psi(0) \qquad \text{for } m\neq 0 \end{align}

It turns out that this system of coupled differential equations cannot, in general, be solved analytically, so a numerical approach is used in the following. For the later implementation, note that \(\bar{\sigma}^2 \in \mathbb{R}\) must hold. This follows from the requirement that the perturbation quantities be real for physical reasons, which in the present form of the system is guaranteed only if \(\bar{\sigma}^2\) is real. In the course of the numerical solution, complex values \(\bar{\sigma}^2 \in \mathbb{C}\) may nevertheless arise due to the mathematical structure of the equations to be solved. Such solutions are regarded as unphysical and are discarded in the physical interpretation.

Numerical formulation as a generalized eigenvalue problem

For the numerical treatment of the problem, it is convenient to introduce the quantity \(\alpha(x)\), defined by:

\begin{align} \alpha(x) := - \frac{\partial_x q(x)}{q(x)} \end{align}

Using the explicit form of \(q(x)\), this immediately yields:

\begin{align} \alpha(x) = \frac{4x}{1 + x^2} \end{align}
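The closed form of \(\alpha(x)\) can be cross-checked numerically. The following sketch compares it with a central finite difference of \(q(x)\). The helper names and the step size `h` are illustrative assumptions and are not taken from the thesis code.

```python
def q(x):
    """Dimensionless equilibrium density profile q(x) = (1 + x^2)^(-2)."""
    return 1.0 / (1.0 + x * x) ** 2


def alpha_closed(x):
    """Closed form alpha(x) = 4x / (1 + x^2)."""
    return 4.0 * x / (1.0 + x * x)


def alpha_numeric(x, h=1e-5):
    """alpha(x) = -q'(x)/q(x), with q' from a central difference of width 2h."""
    dq = (q(x + h) - q(x - h)) / (2.0 * h)
    return -dq / q(x)


# Largest deviation between both evaluations on a few sample radii.
sample_radii = [0.0, 0.5, 1.0, 3.0, 10.0]
max_deviation = max(abs(alpha_closed(x) - alpha_numeric(x)) for x in sample_radii)
```

The deviation is limited by the truncation error of the central difference, which scales with `h**2`.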

Moreover, it is convenient to introduce the quantity \(P(x)\), defined by:

\begin{align} P(x) := \frac{m^2}{x^2} + \bar{k}^2 \end{align}

Furthermore, it is convenient to introduce the following quantities:

\begin{align} &F(x) = \partial_x u(x) + \alpha(x) u(x) + q(x)\,\partial_x \psi(x) \\[6pt] &G(x) = \partial_x \psi(x) \end{align}

The equations to be solved then take the form:

\begin{align} &\frac{1}{x} \partial_x \left(x F\right) - P \left(u + q \psi\right) = \bar{\sigma}^2 u \\[6pt] &\frac{1}{x} \partial_x \left(x G\right) - P \psi = 8u \end{align}

To solve the problem numerically, the equations must be discretized. For this purpose, the interval \(\left[0, x_{\max}\right]\) is divided into \(N\) equidistant subintervals. The corresponding \(N+1\) grid points are given by:

\begin{align} x_j = j\,\Delta x \, , \quad \text{with} \quad \Delta x = \frac{x_{\max}}{N} \, , \quad j \in \left\{0,\ldots,N\right\} \end{align}

In the following, the quantities under consideration, evaluated at the respective grid points, are written as follows:

\begin{align} u_j = u\left(x_j\right) \, , \quad \psi_j = \psi\left(x_j\right) \, , \quad q_j = q\left(x_j\right) \, , \quad \alpha_j = \alpha(x_j) \, , \quad P_j = P(x_j) \end{align}
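A minimal NumPy sketch of this grid and of the sampled profiles is given below. The function name `make_grid` and the returned dictionary layout are illustrative assumptions. Since \(P(x)\) diverges at \(x = 0\) for \(m \neq 0\), the sketch evaluates it only at the interior points, which is where the discretized equations are applied.

```python
import numpy as np


def make_grid(x_max, N, m, kbar):
    """Equidistant grid x_j = j*dx, j = 0..N, with sampled q, alpha and P."""
    dx = x_max / N
    x = dx * np.arange(N + 1)                 # N + 1 grid points
    q = 1.0 / (1.0 + x**2) ** 2               # q_j
    alpha = 4.0 * x / (1.0 + x**2)            # alpha_j
    # P_j only at the interior points j = 1..N-1, where x_j > 0.
    P_interior = m**2 / x[1:-1] ** 2 + kbar**2
    return {"dx": dx, "x": x, "q": q, "alpha": alpha, "P_interior": P_interior}
```

The arrays `x`, `q` and `alpha` have length `N + 1`, whereas `P_interior` has length `N - 1`.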

For the interior grid points \(j \in \{1,\ldots,N-1\}\), the discretized equations follow directly from the continuous equations. The discretized continuity equation is given by:

\begin{align} \frac{1}{x_j} \left(\partial_x \left(x F\right)\right)_j - P_j \left(u_j + q_j \psi_j\right) = \bar{\sigma}^2 u_j \end{align}

The discretized Poisson equation is given by:

\begin{align} \frac{1}{x_j} \left(\partial_x \left(x G\right)\right)_j - P_j \psi_j = 8 u_j \end{align}

Since the problem reduces to a purely radial one after the exponential ansatz and operators in divergence form appear in cylindrical coordinates, a finite-volume discretization is a natural choice. For a function \(f(x)\), the derivative at the \(j\)-th grid point can be written in flux form in terms of the cell faces at \(x_{j\pm\frac12}\) as follows:

\begin{align} \left(\partial_x f\left(x\right)\right)_j = \frac{f_{j + \frac{1}{2}} - f_{j - \frac{1}{2}}}{\Delta x} \end{align}

This yields the following expression for the derivative term in the discretized continuity equation:

\begin{align} \left(\partial_x \left(xF\right)\right)_j = \frac{1}{\Delta x}\left(x_{j+\frac12} F_{j+\frac12} - x_{j-\frac12} F_{j-\frac12}\right) \end{align}

In particular, the following holds:

\begin{align} &F_{j+\frac12} = \left(\partial_x u\right)_{j+\frac12} + \alpha_{j+\frac12} u_{j+\frac12} + q_{j+\frac12}\left(\partial_x \psi\right)_{j+\frac12} \\[6pt] &F_{j-\frac12} = \left(\partial_x u\right)_{j-\frac12} + \alpha_{j-\frac12} u_{j-\frac12} + q_{j-\frac12}\left(\partial_x \psi\right)_{j-\frac12} \end{align}

The quantities at the indices \(j \pm \frac{1}{2}\) are obtained from averages of the neighboring grid points. For equidistant grid points, this therefore gives:

\begin{align} f_{j\pm\frac12} = \frac{f_j + f_{j\pm1}}{2} \end{align}

The derivatives at the indices \(j \pm \frac{1}{2}\) are given by the corresponding difference quotients:

\begin{align} \left(\partial_x f\right)_{j+\frac12} = \frac{f_{j+1}-f_j}{\Delta x} \qquad \text{and} \qquad \left(\partial_x f\right)_{j-\frac12} = \frac{f_j-f_{j-1}}{\Delta x} \end{align}

Substituting these definitions into \(F_{j\pm\frac12}\) and collecting terms yields:

\begin{align} &F_{j+\frac12} = \left(\frac{\alpha_{j+\frac12}}{2} + \frac{1}{\Delta x}\right) u_{j+1} + \left(\frac{\alpha_{j+\frac12}}{2} - \frac{1}{\Delta x}\right) u_j + \frac{q_{j+\frac12}}{\Delta x}\psi_{j+1} - \frac{q_{j+\frac12}}{\Delta x}\psi_j \\[6pt] &F_{j-\frac12} = \left(\frac{\alpha_{j-\frac12}}{2} + \frac{1}{\Delta x}\right) u_j + \left(\frac{\alpha_{j-\frac12}}{2} - \frac{1}{\Delta x}\right) u_{j-1} + \frac{q_{j-\frac12}}{\Delta x}\psi_j - \frac{q_{j-\frac12}}{\Delta x}\psi_{j-1} \end{align}

The discretized continuity equation can thus be written in the following form:

\begin{align} C_{j,-}^{(C)} u_{j-1} + C_{j,0}^{(C)} u_j + C_{j,+}^{(C)} u_{j+1} + D_{j,-}^{(C)} \psi_{j-1} + D_{j,0}^{(C)} \psi_j + D_{j,+}^{(C)} \psi_{j+1} = \bar{\sigma}^2 u_j \end{align}

The coefficients are given by:

\begin{align} C_{j,-}^{(C)} &:= \frac{x_{j-\frac12}}{\Delta x\, x_j}\left(\frac{1}{\Delta x} - \frac{\alpha_{j-\frac12}}{2}\right) \\[6pt] C_{j,0}^{(C)} &:= \frac{x_{j+\frac12}}{\Delta x\, x_j}\left(-\frac{1}{\Delta x} + \frac{\alpha_{j+\frac12}}{2}\right) - \frac{x_{j-\frac12}}{\Delta x\, x_j}\left(\frac{1}{\Delta x} + \frac{\alpha_{j-\frac12}}{2}\right) - P_j \\[6pt] C_{j,+}^{(C)} &:= \frac{x_{j+\frac12}}{\Delta x\, x_j}\left(\frac{1}{\Delta x} + \frac{\alpha_{j+\frac12}}{2}\right) \\[6pt] D_{j,-}^{(C)} &:= \frac{x_{j-\frac12} q_{j-\frac12}}{\Delta x^2 x_j} \\[6pt] D_{j,0}^{(C)} &:= -\frac{x_{j+\frac12} q_{j+\frac12}}{\Delta x^2 x_j} - \frac{x_{j-\frac12} q_{j-\frac12}}{\Delta x^2 x_j} - P_j q_j \\[6pt] D_{j,+}^{(C)} &:= \frac{x_{j+\frac12} q_{j+\frac12}}{\Delta x^2 x_j} \end{align}
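The stencil coefficients of the continuity equation can be sketched in vectorised form as follows. The function name and return layout are hypothetical. Evaluating the known profiles \(q\) and \(\alpha\) directly at the cell faces \(x_{j\pm\frac12}\) is an assumption of this sketch; averaging the neighbouring grid values would be an alternative.

```python
import numpy as np


def q(x):
    return 1.0 / (1.0 + x**2) ** 2


def alpha(x):
    return 4.0 * x / (1.0 + x**2)


def continuity_coefficients(x_max, N, m, kbar):
    """Stencil coefficients C and D at the interior points j = 1..N-1."""
    dx = x_max / N
    xj = dx * np.arange(1, N)
    xp, xm = xj + 0.5 * dx, xj - 0.5 * dx      # cell faces x_{j+1/2}, x_{j-1/2}
    wp, wm = xp / (dx * xj), xm / (dx * xj)    # geometric weights of the faces
    P = m**2 / xj**2 + kbar**2
    ap, am = alpha(xp), alpha(xm)
    C_minus = wm * (1.0 / dx - 0.5 * am)
    C_zero = wp * (0.5 * ap - 1.0 / dx) - wm * (1.0 / dx + 0.5 * am) - P
    C_plus = wp * (1.0 / dx + 0.5 * ap)
    D_minus = wm * q(xm) / dx
    D_zero = -(wp * q(xp) + wm * q(xm)) / dx - P * q(xj)
    D_plus = wp * q(xp) / dx
    return xj, P, (C_minus, C_zero, C_plus), (D_minus, D_zero, D_plus)
```

A simple consistency check uses constant test functions. For \(u \equiv 1\), \(\psi \equiv 0\), the continuous operator reduces to \(\frac{1}{x}\partial_x\left(x\alpha\right) - P = 8q - P\), which follows from the definitions of \(q\) and \(\alpha\). The sum \(C_{j,-}^{(C)} + C_{j,0}^{(C)} + C_{j,+}^{(C)}\) should therefore agree with \(8 q_j - P_j\) up to an error of order \(\Delta x^2\). For \(u \equiv 0\), \(\psi \equiv 1\), the sum of the \(D\) coefficients should equal \(-P_j q_j\).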

Analogously, the following expression is obtained for the derivative term in the discretized Poisson equation:

\begin{align} \left(\partial_x \left(xG\right)\right)_j = \frac{1}{\Delta x}\left(x_{j+\frac12} G_{j+\frac12} - x_{j-\frac12} G_{j-\frac12}\right) \end{align}

After collecting terms, \(G_{j\pm\frac12}\) is given by:

\begin{align} &G_{j+\frac12} = \frac{\psi_{j+1}}{\Delta x} - \frac{\psi_{j}}{\Delta x} \\[6pt] &G_{j-\frac12} = \frac{\psi_{j}}{\Delta x} - \frac{\psi_{j-1}}{\Delta x} \end{align}

The discretized Poisson equation can thus be written in the following form:

\begin{align} C_{j,0}^{(P)} u_j + D_{j,-}^{(P)} \psi_{j-1} + D_{j,0}^{(P)} \psi_j + D_{j,+}^{(P)} \psi_{j+1} = 0 \end{align}

The coefficients are then given by:

\begin{align} C_{j,0}^{(P)} &:= -8 \\[6pt] D_{j,-}^{(P)} &:= \frac{x_{j-\frac12}}{\Delta x^2 x_j} \\[6pt] D_{j,0}^{(P)} &:= -\frac{x_{j+\frac12} + x_{j-\frac12}}{\Delta x^2 x_j} - P_j \\[6pt] D_{j,+}^{(P)} &:= \frac{x_{j+\frac12}}{\Delta x^2 x_j} \end{align}
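The Poisson stencil can be sketched in the same way. The function name and return layout are again illustrative assumptions.

```python
import numpy as np


def poisson_coefficients(x_max, N, m, kbar):
    """Stencil coefficients of the discretized Poisson equation, j = 1..N-1."""
    dx = x_max / N
    xj = dx * np.arange(1, N)
    xp, xm = xj + 0.5 * dx, xj - 0.5 * dx      # cell faces x_{j+1/2}, x_{j-1/2}
    P = m**2 / xj**2 + kbar**2
    C_zero = np.full_like(xj, -8.0)            # coefficient of u_j
    D_minus = xm / (dx**2 * xj)                # coefficient of psi_{j-1}
    D_zero = -(xp + xm) / (dx**2 * xj) - P     # coefficient of psi_j
    D_plus = xp / (dx**2 * xj)                 # coefficient of psi_{j+1}
    return xj, C_zero, (D_minus, D_zero, D_plus)
```

For \(m = 0\), \(\bar{k} = 0\) and the test function \(\psi = x^2\), the continuous operator gives \(\frac{1}{x}\partial_x\left(x \cdot 2x\right) = 4\). The flux-form stencil reproduces this value exactly up to rounding, which provides a quick check of the signs and weights.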

The grid points at the boundaries are determined by the boundary conditions. At the outer boundary, \(x = x_{\max}\), the discretized boundary condition is given by:

\begin{align} u_N = 0 = \psi_N \end{align}

At the inner boundary, \(x = 0\), a distinction must be made between the cases \(m = 0\) and \(m \neq 0\). For \(m = 0\), the following holds:

\begin{align} \left(\partial_x u\right)_0 = 0 = \left(\partial_x \psi\right)_0 \end{align}

By definition, the derivative at the grid point with index \(j = 0\) is given by:

\begin{align} \phantom{\Rightarrow}\;& \left(\partial_x u\right)_0 = \frac{u_{\frac12} - u_{-\frac12}}{\Delta x} \\[6pt] \Leftrightarrow\;& \left(\partial_x u\right)_0 = \frac{u_1 - u_{-1}}{2 \Delta x} \end{align}

At this point, a term \(u_{-1}\) appears. However, a grid point with index \(j = -1\) does not exist on the grid under consideration. The same problem arises analogously for the dimensionless potential perturbation. Therefore, the derivative at the boundary grid point \(j = 0\) must be treated separately.

Since the approximation of the derivative used here has an error of order \(\mathcal{O}(\Delta x^2)\), it is convenient to replace the derivative at the boundary by an approximation of the same order. In the present case, this can only be achieved by means of a second-order forward difference approximation, since no grid point with index \(j = -1\) is available. The second-order forward difference approximation is given by:

\begin{align} \left(\partial_x u\right)_0 = \frac{-3 u_0 + 4 u_1 - u_2}{2 \Delta x} \end{align}

Thus, for the case \(m = 0\), the boundary condition on the cylinder axis can be approximated in the discretization as follows:

\begin{align} -3 u_0 + 4 u_1 - u_2 = 0 = -3 \psi_0 + 4 \psi_1 - \psi_2 \end{align}

For the case \(m \neq 0\), by contrast, the discretized boundary condition on the cylinder axis is given by:

\begin{align} u_0 = 0 = \psi_0 \end{align}
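The one-sided formula used for the case \(m = 0\) can be checked with a short sketch. The function name and the quadratic test function are illustrative assumptions.

```python
def forward_difference_at_origin(f0, f1, f2, dx):
    """Second-order one-sided approximation of f'(0) from f(0), f(dx), f(2*dx)."""
    return (-3.0 * f0 + 4.0 * f1 - f2) / (2.0 * dx)


def quadratic(x):
    """Test function with known derivative 3 at x = 0."""
    return 2.0 + 3.0 * x + 5.0 * x**2


dx = 0.1
approx = forward_difference_at_origin(
    quadratic(0.0), quadratic(dx), quadratic(2.0 * dx), dx
)
```

Since the formula is exact for polynomials up to second degree, `approx` agrees with the exact derivative \(3\) up to rounding errors, independently of the chosen `dx`.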

The problem can thus be formulated as a coupled system with originally \(2N + 2\) unknowns. However, since the boundary conditions at the outer boundary are already prescribed, namely \(u_N = 0\) and \(\psi_N = 0\), these two unknowns no longer need to be solved for. The resulting system is therefore reduced to \(2N\) unknowns, two equations for the boundary conditions at the origin, and \(2N - 2\) equations for the interior grid points \(j \in \{1,\ldots,N-1\}\).

This system can be written in matrix form as a generalized eigenvalue problem:

\begin{align} \mathbf{A}(\bar{k})\boldsymbol{y} = \bar{\sigma}^2\mathbf{B}\boldsymbol{y} \end{align}

Here, \(\mathbf{A}, \mathbf{B} \in \mathbb{R}^{2N \times 2N}\). The matrix \(\mathbf{A}\) contains all terms that are independent of \(\bar{\sigma}^2\), whereas \(\mathbf{B}\) contains all contributions multiplied by \(\bar{\sigma}^2\). The vector \(\boldsymbol{y}\) contains all unknowns and is given by:

\begin{align} \boldsymbol{y} = \left(u_0, u_1, \ldots, u_{N-1}, \psi_0, \psi_1, \ldots, \psi_{N-1}\right)^\mathsf{T} \in \mathbb{R}^{2N} \end{align}

The equations are arranged such that the first two rows describe the boundary conditions at the origin, while the subsequent rows contain the discretized equations at the interior grid points. Accordingly, all entries in the first two rows of the matrix \(\mathbf{A}\) are zero, except for those acting on \(u_0\), \(u_1\), and \(u_2\), and on \(\psi_0\), \(\psi_1\), and \(\psi_2\), respectively. These matrix elements encode the corresponding boundary conditions.

For \(m = 0\), the corresponding nonzero matrix elements are given by

\begin{align} & A_{0,0} = -3 \, , \qquad A_{0,1} = 4 \, , \qquad A_{0,2} = -1 \\[6pt] & A_{1,N} = -3 \, , \qquad A_{1,N+1} = 4 \, , \qquad A_{1,N+2} = -1 \end{align}

For the case \(m \neq 0\), by contrast, only those matrix elements acting on \(u_0\) and \(\psi_0\), respectively, are nonzero. In that case, the following holds:

\begin{align} A_{0,0} = 1 \quad \text{and} \quad A_{1,N} = 1 \end{align}

The rows \(r = j + 1\) with \(j \in \{1,\ldots,N-1\}\) are chosen such that they represent the discretized dimensionless continuity equation. The nonzero entries in these rows acting on the \(u\)-block are given by:

\begin{align} A_{j+1,j-1} = C_{j,-}^{(C)} \, , \quad A_{j+1,j} = C_{j,0}^{(C)} \, , \quad A_{j+1,j+1} = C_{j,+}^{(C)} \end{align}

This form formally applies to all \(j \in \{1,\ldots,N-1\}\). For the boundary case \(j = N-1\), however, the index \(j+1 = N\) would refer to \(u_N\). Since \(u_N\), just like \(\psi_N\), has already been eliminated by the boundary condition \(u_N = 0\) and is therefore not contained in \(\boldsymbol{y}\), the corresponding term vanishes and no matrix entry is set for it.

Analogously, the nonzero entries that act on the \(\psi\)-block in the continuity equation are given by:

\begin{align} A_{j+1,N+j-1} = D_{j,-}^{(C)} \, , \quad A_{j+1,N+j} = D_{j,0}^{(C)} \, , \quad A_{j+1,N+j+1} = D_{j,+}^{(C)} \end{align}

Here as well, the boundary case \(j = N-1\) must be treated separately, since \(N + j + 1 = 2N\) would refer to \(\psi_N\), which has already been eliminated by the boundary condition.

The rows \(r = N + j\) with \(j \in \{1,\ldots,N-1\}\) describe the discretized Poisson equation. The nonzero entries acting on the \(u\)-block are the following:

\begin{align} A_{N+j,j} = C_{j,0}^{(P)} \end{align}

The nonzero entries acting on the \(\psi\)-block are given by:

\begin{align} A_{N+j,N+j-1} = D_{j,-}^{(P)} \, , \quad A_{N+j,N+j} = D_{j,0}^{(P)} \, , \quad A_{N+j,N+j+1} = D_{j,+}^{(P)} \end{align}

In the Poisson equation as well, the boundary case \(j = N-1\) must be treated analogously to the continuity equation.

In the matrix \(\mathbf{B}\), the entries in the first two rows are zero. This is because \(\mathbf{B}\) contains only terms multiplied by \(\bar{\sigma}^2\), while the boundary conditions at the origin do not contain any contributions involving \(\bar{\sigma}^2\).

For the rows \(r = j+1\) with \(j \in \{1,\ldots,N-1\}\), which represent the dimensionless continuity equation, the only nonzero entries are given by:

\begin{align} B_{j+1,j} = 1 \end{align}

Since the dimensionless Poisson equation does not contain any terms depending on \(\bar{\sigma}^2\), all entries in the rows \(r = N + j\) representing the Poisson equation are zero.

This generalized eigenvalue problem can now be solved numerically. In the present work, the Python library SciPy was used for this purpose, as it provides routines for solving generalized eigenvalue problems. Of particular interest are the eigenvalues, since they determine the linear temporal evolution of the perturbations.
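The assembly described above can be sketched as follows. This is a minimal dense-matrix sketch under stated assumptions and not the implementation used in this work. The names `assemble`, `finite_eigenvalues` and the tolerance `tol` are hypothetical, and \(q\) and \(\alpha\) are evaluated directly at the cell faces. Because \(\mathbf{B}\) contains zero rows, the matrix pencil has infinite eigenvalues. The sketch therefore requests the eigenvalues from `scipy.linalg.eigvals` in homogeneous form and keeps only the finite ones.

```python
import numpy as np
from scipy.linalg import eigvals


def q(x):
    return 1.0 / (1.0 + x**2) ** 2


def alpha(x):
    return 4.0 * x / (1.0 + x**2)


def assemble(m, kbar, x_max, N):
    """Return (A, B) for y = (u_0..u_{N-1}, psi_0..psi_{N-1}); requires N >= 3."""
    dx = x_max / N
    A = np.zeros((2 * N, 2 * N))
    B = np.zeros((2 * N, 2 * N))

    # Rows 0 and 1: boundary conditions on the cylinder axis.
    if m == 0:
        A[0, 0:3] = (-3.0, 4.0, -1.0)
        A[1, N:N + 3] = (-3.0, 4.0, -1.0)
    else:
        A[0, 0] = 1.0
        A[1, N] = 1.0

    for j in range(1, N):
        xj = j * dx
        xp, xm = xj + 0.5 * dx, xj - 0.5 * dx
        wp, wm = xp / (dx * xj), xm / (dx * xj)
        P = m**2 / xj**2 + kbar**2

        r = j + 1          # row of the continuity equation at x_j
        s = N + j          # row of the Poisson equation at x_j

        # Continuity equation: u-block and psi-block.
        A[r, j - 1] = wm * (1.0 / dx - 0.5 * alpha(xm))
        A[r, j] = (wp * (0.5 * alpha(xp) - 1.0 / dx)
                   - wm * (1.0 / dx + 0.5 * alpha(xm)) - P)
        A[r, N + j - 1] = wm * q(xm) / dx
        A[r, N + j] = -(wp * q(xp) + wm * q(xm)) / dx - P * q(xj)
        B[r, j] = 1.0

        # Poisson equation.
        A[s, j] = -8.0
        A[s, N + j - 1] = wm / dx
        A[s, N + j] = -(wp + wm) / dx - P

        # Entries coupling to j + 1 are omitted for j = N - 1 (u_N = psi_N = 0).
        if j < N - 1:
            A[r, j + 1] = wp * (1.0 / dx + 0.5 * alpha(xp))
            A[r, N + j + 1] = wp * q(xp) / dx
            A[s, N + j + 1] = wp / dx
    return A, B


def finite_eigenvalues(A, B, tol=1e-10):
    """Finite eigenvalues sigma^2 of A y = sigma^2 B y (tol is an assumption)."""
    a, b = eigvals(A, B, homogeneous_eigvals=True)   # sigma^2 = a / b
    keep = np.abs(b) > tol * np.abs(a)               # drop infinite eigenvalues
    return a[keep] / b[keep]
```

For given values of \(m\), \(\bar{k}\), \(x_{\max}\) and \(N\), the call `finite_eigenvalues(*assemble(m, kbar, x_max, N))` returns the finite part of the spectrum as a complex array. Dense matrices are used here for simplicity; for large \(N\), a sparse formulation might be preferable.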

Structure of the numerical spectrum

For a fixed pair \((m,\bar{k})\), the linear stability analysis reduces to an eigenvalue problem in the dimensionless radial coordinate \(x\). In general, this problem possesses several eigenvalues \(\bar{\sigma}_n^2\) with corresponding eigenfunctions \(u_n(x)\) and \(\psi_n(x)\), respectively. Before interpreting individual parts of this spectrum physically or comparing different pairs \((m,\bar{k})\) with one another, it is first convenient to examine the structure of the entire numerically obtained spectrum for a specific example.

In the following, the spectrum of eigenvalues for the fixed pair \((m,\bar{k}) = (0,1)\) is considered as an illustrative example. The main purpose is to investigate the qualitative structure of the numerically determined spectrum. For this purpose, the numerical solution of the given equations is first carried out on a computational domain with \(x_{\max} = 25\) and \(N = 500\) subintervals.

For the illustrative pair \((m,\bar{k}) = (0,1)\), the numerical solution of the generalized eigenvalue problem yields a discrete spectrum of eigenvalues \(\bar{\sigma}_n^2\). A characteristic structure of the spectrum becomes apparent. Within the investigated numerical setup, only one eigenvalue is found above the threshold \(-\bar{k}^2\), that is, satisfying the condition \(\bar{\sigma}^2 > -\bar{k}^2\). The remaining numerically determined eigenvalues lie below this threshold. The following figure shows the corresponding numerically determined spectrum for \((m,\bar{k}) = (0,1)\). The dashed horizontal line marks the threshold \(-\bar{k}^2\). The inset shows the last ten eigenvalues of the spectrum and illustrates that only a single eigenvalue lies above this threshold, while all remaining eigenvalues stay below it.

This observation is not restricted to the pair \((m,\bar{k}) = (0,1)\), but is also found for other numerically investigated combinations of azimuthal mode number \(m\) and dimensionless wavenumber \(\bar{k}\). Numerically, it is also observed that the eigenvalues approach the threshold \(-\bar{k}^2\). Whether this threshold actually constitutes an accumulation point of the spectrum is not proved here; this is also not required for the arguments and observations presented in the following.

This numerically observed structure of the spectrum is not merely an isolated feature of the discretized problem, but can be explained from the structure of the underlying boundary value problem. The generalized eigenvalue problem is formulated on the semi-infinite interval \(x \in [0,\infty)\) and is therefore determined to a large extent by the behavior of the solutions in the two boundary regions. A physically admissible solution must satisfy both the boundary condition at \(x = 0\) and the one at \(x \to \infty\). It is therefore natural to examine the coupled differential equations more closely precisely in these two limiting regions. In particular, it turns out that the threshold \(-\bar{k}^2\) observed in the numerical spectrum arises naturally from the behavior of the equations at large radii.

Asymptotic behavior of the solutions

In the following, the local behavior of the solutions at \(x = 0\) is first examined, followed by the asymptotic behavior for \(x \to \infty\). On this basis, it then becomes possible to understand why the numerically determined spectrum for a fixed pair \((m,\bar{k})\) exhibits the characteristic structure observed above. This analysis is used to interpret the structure of the numerically obtained spectrum. It is not intended as a mathematical proof.

To investigate the local behavior of the solutions at \(x = 0\), it is convenient to consider the continuity equation in the following form:

\begin{align} \frac{1}{x}\partial_x \left( x q \left(\partial_x \left(\frac{u}{q}\right) + \partial_x \psi \right)\right) = \bar{\sigma}^2 u + q\left(\frac{m^2}{x^2} + \bar{k}^2\right)\left(\frac{u}{q} + \psi\right) \end{align}

For small \(x\), \(q(x)\) can be expanded about \(x = 0\). This gives:

\begin{align} q(x)=1+\mathcal{O}(x^2) \end{align}

In particular, this implies the following asymptotic behavior as \(x \to 0\):

\begin{align} q(x)\sim 1 \end{align}

Thus, for small \(x\), the continuity equation asymptotically reduces to:

\begin{align} \frac{1}{x}\partial_x \left( x \left(\partial_x u + \partial_x \psi \right)\right) = \bar{\sigma}^2 u + \left(\frac{m^2}{x^2}+\bar{k}^2\right)(u+\psi) \end{align}

Since the coupled differential equations contain terms of the form \(\frac{1}{x}\) and \(\frac{1}{x^2}\) at the origin, \(x = 0\) is a singular point of the system. To determine the local behavior of the solutions near this point, a Frobenius ansatz is used in the following. To this end, the local solutions near \(x = 0\) are assumed to be of the form:

\begin{align} u(x)=x^\nu \sum_{n=0}^{\infty} a_n x^n \quad \text{and} \quad \psi(x)=x^\mu \sum_{n=0}^{\infty} b_n x^n \end{align}

Here, \(a_0 \neq 0\) and \(b_0 \neq 0\), while the exponents \(\nu\) and \(\mu\) are to be determined from the differential equations.

Since the asymptotic behavior as \(x \to 0\) is of interest here, it is sufficient to consider only the dominant term of each ansatz. Thus, asymptotically,

\begin{align} u(x)\sim a_0 x^\nu \quad \text{and} \quad \psi(x)\sim b_0 x^\mu \end{align}

Substituting into the continuity equation and subsequently collecting terms according to powers of \(x\) yields:

\begin{align} a_0\left(\nu^2-m^2\right)x^{\nu-2} + b_0\left(\mu^2-m^2\right)x^{\mu-2} - a_0\left(\bar{\sigma}^2+\bar{k}^2\right)x^\nu - b_0\bar{k}^2 x^\mu =0 \end{align}

As \(x \to 0\), the terms with the smaller exponents dominate over those with the larger exponents. Therefore, in the leading-order behavior, the coefficients of the dominant terms must vanish. It follows that:

\begin{align} \nu=\pm |m| \quad \text{and} \quad \mu=\pm |m| \end{align}
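The leading-order balance can be made explicit in a short worked step. Treating the \(u\)- and \(\psi\)-contributions separately, as in the argument above, the radial derivative acts on a power law as

\begin{align} \frac{1}{x}\partial_x\left(x\,\partial_x x^{\nu}\right) = \nu^2 x^{\nu-2} \end{align}

so that the contributions of order \(x^{\nu-2}\) combine to \(a_0\left(\nu^2-m^2\right)x^{\nu-2}\). Since \(a_0 \neq 0\), this coefficient can only vanish for \(\nu^2 = m^2\). The same step applied to the \(\psi\)-contribution gives \(\mu^2 = m^2\).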

Since the underlying differential equations are linear, the general local solution is given by a superposition of the corresponding branches. Thus, asymptotically as \(x \to 0\),

\begin{align} u(x)\sim a_{0,+}x^{|m|}+a_{0,-}x^{-|m|} \quad \text{and} \quad \psi(x)\sim b_{0,+}x^{|m|}+b_{0,-}x^{-|m|} \end{align}

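For \(m \neq 0\), the branches with negative exponents diverge as \(x \to 0\) and are therefore incompatible with the boundary conditions at the origin. For \(m = 0\), the two exponents coincide, and the second independent local solution is logarithmic and diverges at the origin as well. Consequently, the coefficients of the diverging solutions must vanish: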

\begin{align} a_{0,-} = 0 = b_{0,-} \end{align}

This yields the asymptotic solutions near \(x = 0\):

\begin{align} u(x)\sim a_{0,+}x^{|m|} \quad \text{and} \quad \psi(x)\sim b_{0,+}x^{|m|} \end{align}

To investigate the asymptotic behavior of the solutions as \(x \to \infty\), it is convenient to first consider the Poisson equation. This is an inhomogeneous linear differential equation. Its general solution \(\psi(x)\) therefore consists of the solution of the associated homogeneous differential equation \(\psi_{\text{h}}(x)\) and a particular solution of the inhomogeneous equation \(\psi_{\text{p}}(x)\):

\begin{align} \psi(x) = \psi_{\text{h}}(x) + \psi_{\text{p}}(x) \end{align}

The associated homogeneous Poisson equation is given by:

\begin{align} \phantom{\Leftrightarrow}\;& \frac{1}{x} \partial_x \left(x \partial_x \psi_{\text{h}}\right) - \left(\frac{m^2}{x^2} + \bar{k}^2\right) \psi_{\text{h}} = 0 \\[6pt] \Leftrightarrow\;& \partial_x^2 \psi_{\text{h}} + \frac{1}{x} \partial_x \psi_{\text{h}} - \left(\frac{m^2}{x^2} + \bar{k}^2\right) \psi_{\text{h}} = 0 \end{align}

With the substitution \(z = \bar{k}x\), this equation can be transformed into the form of the modified Bessel equation:

\begin{align} z^2 \partial_z^2 \psi_{\text{h}} + z \partial_z \psi_{\text{h}} - \left(m^2 + z^2\right)\psi_{\text{h}} = 0 \end{align}
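As a brief worked step, the transformation can be traced as follows. With \(z=\bar{k}x\) and \(\bar{k}>0\) assumed, the chain rule gives \(\partial_x = \bar{k}\,\partial_z\). Multiplying the homogeneous Poisson equation by \(x^2\) and using these relations term by term yields

\begin{align} x^2 \partial_x^2 \psi_{\text{h}} + x \partial_x \psi_{\text{h}} - \left(m^2 + \bar{k}^2 x^2\right)\psi_{\text{h}} = 0 \qquad \text{with} \qquad x^2\partial_x^2 = z^2\partial_z^2, \quad x\partial_x = z\partial_z, \quad \bar{k}^2x^2 = z^2 \end{align}

which reproduces the modified Bessel equation of order \(m\) stated above.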

The general solution of this equation is given by the modified Bessel functions:

\begin{align} \psi_{\text{h}}(x) = b_{\text{h},+} I_m(\bar{k}x) + b_{\text{h},-} K_m(\bar{k}x) \end{align}

For \(x \to \infty\), this yields the following asymptotic behavior:

\begin{align} \psi_{\text{h}}(x) \sim b_{\text{h},+} x^{-\frac12} \exp(\bar{k}x) + b_{\text{h},-} x^{-\frac12} \exp(-\bar{k}x) \end{align}

Since the perturbations must vanish in the limit \(x \to \infty\), the exponentially growing branch of the homogeneous solution is not admissible. Consequently, the following must hold:

\begin{align} b_{\text{h},+}=0 \end{align}

The asymptotic behavior of the homogeneous solution of the Poisson equation is therefore given by:

\begin{align} \psi_{\text{h}}(x)\sim x^{-\frac12} \exp(-\bar{k}x) \end{align}
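The large-argument behavior used here can be checked numerically. The following sketch compares \(I_m(z)\) and \(K_m(z)\) with their standard leading forms \(e^{z}/\sqrt{2\pi z}\) and \(\sqrt{\pi/(2z)}\,e^{-z}\), whose constant prefactors are absorbed into \(b_{\text{h},\pm}\) in the text. The function name `scaled_bessel_ratios` and the sample values of \(z\) are illustrative assumptions; the exponentially scaled SciPy routines `ive` and `kve` are used to avoid overflow and underflow.

```python
import numpy as np
from scipy.special import ive, kve


def scaled_bessel_ratios(m, z):
    """Ratios of I_m(z) and K_m(z) to their leading large-z forms.

    For real z > 0, ive(m, z) = I_m(z) * exp(-z) and
    kve(m, z) = K_m(z) * exp(z), so the exponentials cancel analytically.
    Both ratios should tend to 1 as z grows.
    """
    ratio_I = ive(m, z) * np.sqrt(2.0 * np.pi * z)
    ratio_K = kve(m, z) * np.sqrt(2.0 * z / np.pi)
    return ratio_I, ratio_K


# Illustrative values: m = 0 and k_bar = 1, so that z = x.
for z in (5.0, 50.0, 500.0):
    print(z, scaled_bessel_ratios(0, z))
```

Since the ratios approach unity only algebraically in \(1/z\), the leading forms are most reliable well outside the filament core.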

To determine the asymptotic behavior of the particular solution \(\psi_{\text{p}}(x)\), the asymptotic behavior of \(u(x)\) is also required, since, up to a constant prefactor, the inhomogeneity of the Poisson equation is precisely given by \(u(x)\). To this end, it is first necessary to examine the asymptotic behavior of the \(x\)-dependent prefactors in the differential equations. For large \(x\), these can be expanded as follows:

\begin{align} q(x)=x^{-4}+\mathcal{O}(x^{-6}) \qquad \text{and} \qquad \frac{\partial_x q(x)}{q(x)}=-4x^{-1}+\mathcal{O}(x^{-3}) \end{align}

In particular, this implies the following asymptotic behavior as \(x\to\infty\):

\begin{align} q(x)\sim x^{-4} \qquad \text{and} \qquad \frac{\partial_x q(x)}{q(x)}\sim -4x^{-1} \end{align}
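For orientation, these expansions can be reproduced explicitly under the assumption that the dimensionless equilibrium profile has the form \(q(x) = (1+x^2)^{-2}\). This form is not stated in this section; it is used here only because it is consistent with both the small-\(x\) and the large-\(x\) expansions given above. Under this assumption,

\begin{align} q(x) = \frac{1}{x^4}\left(1+\frac{1}{x^2}\right)^{-2} = x^{-4} - 2x^{-6} + \mathcal{O}(x^{-8}) \qquad \text{and} \qquad \frac{\partial_x q(x)}{q(x)} = -\frac{4x}{1+x^2} = -4x^{-1} + 4x^{-3} + \mathcal{O}(x^{-5}) \end{align}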

Thus, the continuity equation is asymptotically given by:

\begin{align} \phantom{\Leftrightarrow}\;& \frac{1}{x}\partial_x\left(x\left(\partial_x u+\frac{4}{x}u+\frac{1}{x^4}\partial_x\psi\right)\right) = \bar{\sigma}^2 u+\left(\frac{m^2}{x^2}+\bar{k}^2\right)\left(u+\frac{\psi}{x^4}\right) \\[6pt] \Leftrightarrow\;& \partial_x^2 u+\frac{5}{x}\partial_x u+\frac{1}{x^4}\partial_x^2\psi-\frac{3}{x^5}\partial_x\psi = \bar{\sigma}^2 u+\left(\frac{m^2}{x^2}+\bar{k}^2\right)\left(u+\frac{\psi}{x^4}\right) \\[6pt] \Leftrightarrow\;& \partial_x^2 u+\frac{5}{x}\partial_x u-\left(\bar{\sigma}^2+\frac{m^2}{x^2}+\bar{k}^2\right)u = -\frac{1}{x^4}\partial_x^2\psi+\frac{3}{x^5}\partial_x\psi+\left(\frac{m^2}{x^6}+\frac{\bar{k}^2}{x^4}\right)\psi. \end{align}

Formally, the \(\psi\)-terms appearing on the right-hand side can be regarded as an inhomogeneity of the differential equation for \(u(x)\). Since \(\psi(x)\), however, is itself an unknown function coupled to \(u(x)\), these terms are more precisely interpreted as coupling terms. If the \(\psi\)-terms are formally regarded as an inhomogeneity, the associated homogeneous continuity equation is given by:

\begin{align} \partial_x^2 u_{\text{h}}+\frac{5}{x}\partial_x u_{\text{h}}-\left(\bar{\sigma}^2+\frac{m^2}{x^2}+\bar{k}^2\right)u_{\text{h}}=0 \end{align}

Since for \(\bar{\sigma}^2 \neq -\bar{k}^2\) both terms proportional to \(x^{-1}\) and \(x^{-2}\), as well as constant contributions, are present, it is natural in this case to use the following ansatz for the asymptotic solution of the differential equation:

\begin{align} u_{\text{h}}(x) \sim x^\beta \exp(\lambda x) \end{align}

Substituting into the homogeneous continuity equation and subsequently collecting terms according to powers of \(x\) yields:

\begin{align} \left( \lambda^2-(\bar{\sigma}^2+\bar{k}^2) +\frac{(2\beta+5)\lambda}{x} +\frac{\beta^2+4\beta-m^2}{x^2} \right)u_{\text{h}}=0 \end{align}
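The collection of terms can be verified symbolically. The following sketch, which assumes that SymPy is available and uses illustrative symbol names (`sigma2` stands for \(\bar{\sigma}^2\), `k` for \(\bar{k}\)), inserts the ansatz into the homogeneous continuity equation, divides by \(u_{\text{h}}\), and compares the result with the bracket given above.

```python
import sympy as sp

x = sp.symbols("x", positive=True)
beta, lam, m, sigma2, k = sp.symbols("beta lambda m sigma2 k", real=True)

# Asymptotic ansatz u_h ~ x^beta * exp(lambda * x)
u = x**beta * sp.exp(lam * x)

# Left-hand side of the homogeneous continuity equation, divided by u_h
lhs_over_u = (
    sp.diff(u, x, 2)
    + 5 / x * sp.diff(u, x)
    - (sigma2 + m**2 / x**2 + k**2) * u
) / u

# Bracket as collected by powers of 1/x in the text
bracket = (
    lam**2
    - (sigma2 + k**2)
    + (2 * beta + 5) * lam / x
    + (beta**2 + 4 * beta - m**2) / x**2
)

# The two expressions should agree identically
difference = sp.simplify(sp.expand(lhs_over_u - bracket))
print(difference)
```

A vanishing `difference` confirms that no terms were lost when sorting by powers of \(x\).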

Since the equation under consideration describes the asymptotic behavior of the solution for \(x\to\infty\), the prefactor in parentheses must asymptotically tend to zero. It follows that:

\begin{align} \lambda^2-(\bar{\sigma}^2+\bar{k}^2) +\frac{(2\beta+5)\lambda}{x} +\frac{\beta^2+4\beta-m^2}{x^2} \to 0 \qquad \text{for } x\to\infty. \end{align}

As \(x\to\infty\), the constant term dominates over the terms proportional to \(x^{-1}\) and \(x^{-2}\). Therefore, the following must first hold:

\begin{align} \phantom{\Leftrightarrow}\;& \lambda^2 = \bar{\sigma}^2+\bar{k}^2 =: \Lambda^2 \\[6pt] \Leftrightarrow\;& \lambda = \pm \Lambda \end{align}

If \(\lambda\neq 0\), that is, if \(\bar{\sigma}^2\neq -\bar{k}^2\), then after the constant term has vanished, the term proportional to \(x^{-1}\) is the next-to-leading contribution. In order for this term to vanish as well, the following condition must hold:

\begin{align} (2\beta+5)\lambda=0 \end{align}

Since \(\lambda\neq 0\), this immediately implies:

\begin{align} \beta=-\frac{5}{2} \end{align}

Thus, for \(\bar{\sigma}^2\neq -\bar{k}^2\), the asymptotic behavior of the homogeneous solution is given by:

\begin{align} u_{\text{h}}(x)\sim x^{-5/2}\left(a_{\text{h},+}\exp(\Lambda x)+a_{\text{h},-}\exp(-\Lambda x)\right) \end{align}

Here as well, the perturbation must vanish at infinity. If \(\Lambda\) is real, which is the case for \(\bar{\sigma}^2 > -\bar{k}^2\), it can be taken to be positive without loss of generality, so that the exponentially growing branch is not admissible. Hence, \(a_{\text{h},+}=0\) must hold. The asymptotic behavior of the homogeneous solution of the continuity equation for \(\bar{\sigma}^2\neq -\bar{k}^2\) is therefore given by:

\begin{align} u_{\text{h}}(x)\sim x^{-5/2}\exp(-\Lambda x) \end{align}
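As a cross-check that is not part of the original analysis, the homogeneous equation can be reduced to a modified Bessel equation: substituting \(u_{\text{h}} = x^{-2} w\) gives \(\partial_x^2 w + \frac{1}{x}\partial_x w - \left(\Lambda^2 + \frac{4+m^2}{x^2}\right) w = 0\), so that \(x^{-2}K_{\nu}(\Lambda x)\) with \(\nu = \sqrt{4+m^2}\) is a decaying solution for real \(\Lambda > 0\). The following sketch tests this numerically under that assumption. The function names and parameter values are illustrative. It evaluates the residual of the homogeneous equation and the ratio of the Bessel-type solution to \(x^{-5/2}\exp(-\Lambda x)\), which should approach the constant \(\sqrt{\pi/(2\Lambda)}\).

```python
import numpy as np
from scipy.special import kv, kve, kvp


def ode_residual(x, m, Lam):
    """Residual of u'' + (5/x) u' - (Lam^2 + m^2/x^2) u for u = x^-2 K_nu(Lam x)."""
    nu = np.sqrt(4.0 + m**2)
    K = kv(nu, Lam * x)        # K_nu
    K1 = kvp(nu, Lam * x, 1)   # first derivative of K_nu w.r.t. its argument
    K2 = kvp(nu, Lam * x, 2)   # second derivative of K_nu w.r.t. its argument
    u = K / x**2
    du = -2.0 * K / x**3 + Lam * K1 / x**2
    d2u = 6.0 * K / x**4 - 4.0 * Lam * K1 / x**3 + Lam**2 * K2 / x**2
    return d2u + 5.0 * du / x - (Lam**2 + m**2 / x**2) * u


def scaled_ratio(x, m, Lam):
    """Ratio of x^-2 K_nu(Lam x) to x^(-5/2) exp(-Lam x), using kve = K_nu * exp(z)."""
    nu = np.sqrt(4.0 + m**2)
    return np.sqrt(x) * kve(nu, Lam * x)


# Illustrative parameters: m = 1, Lambda = 1
print(ode_residual(2.0, 1, 1.0))
print(scaled_ratio(400.0, 1, 1.0), np.sqrt(np.pi / 2.0))
```

The ratio converges only algebraically, which reflects the fact that the form \(x^{-5/2}\exp(-\Lambda x)\) captures the leading behavior but not the \(1/x\) corrections.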

In the limiting case \(\bar{\sigma}^2=-\bar{k}^2\), the term with constant prefactor vanishes. In this case, the homogeneous continuity equation is given by:

\begin{align} \partial_x^2 u_{\mathrm{h}}+\frac{5}{x}\partial_x u_{\mathrm{h}}-\frac{m^2}{x^2}u_{\mathrm{h}}=0 \end{align}

Since no constant prefactors appear in this equation any longer, it is natural to use a power-law ansatz of the following form for the asymptotic solution:

\begin{align} u_{\mathrm{h}}(x)\sim x^\beta \end{align}

Substituting into the homogeneous continuity equation yields:

\begin{align} \frac{\beta^2+4\beta-m^2}{x^2}u_{\mathrm{h}}=0 \end{align}

Since \(u_{\mathrm{h}}\) does not vanish identically, the coefficient \(\beta^2+4\beta-m^2\) must vanish. This implies the following for the exponent \(\beta\):

\begin{align} \beta=-2\pm\sqrt{4+m^2} \end{align}

Thus, for \(\bar{\sigma}^2=-\bar{k}^2\), the asymptotic behavior of the homogeneous solution is given by:

\begin{align} u_{\mathrm{h}}(x)\sim x^{-2}\left(a_{\mathrm{h},+}x^{\sqrt{4+m^2}}+a_{\mathrm{h},-}x^{-\sqrt{4+m^2}}\right) \end{align}

Here as well, the perturbation must vanish at infinity, so that the algebraically growing branch, or, in the special case \(m=0\), the constant branch, is not admissible. Hence, \(a_{\mathrm{h},+}=0\) must hold. The asymptotic behavior of the homogeneous solution of the continuity equation for \(\bar{\sigma}^2=-\bar{k}^2\) is therefore given by:

\begin{align} u_{\mathrm{h}}(x) \sim x^{-2-\sqrt{4+m^2}} \end{align}
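As a worked example, the exponents for the two lowest azimuthal mode numbers evaluate to

\begin{align} m=0:\quad \beta = -2\pm 2 \in \{0,\,-4\} \qquad \text{and} \qquad m=1:\quad \beta = -2\pm\sqrt{5} \approx \{0.236,\,-4.236\} \end{align}

so that the admissible branch decays as \(x^{-4}\) for \(m=0\) and approximately as \(x^{-4.236}\) for \(m=1\).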

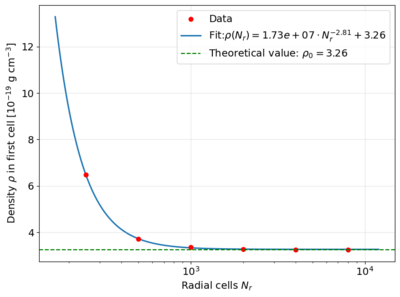

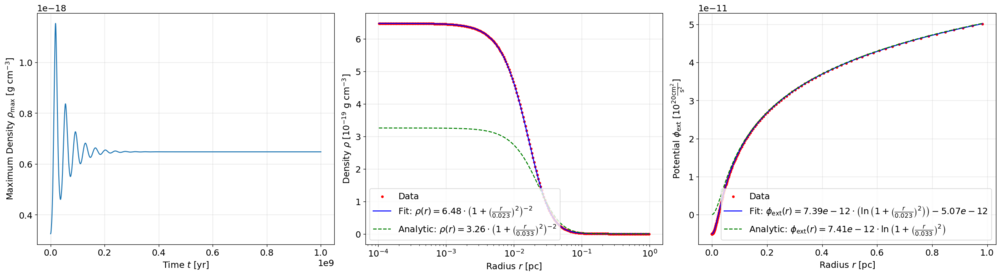

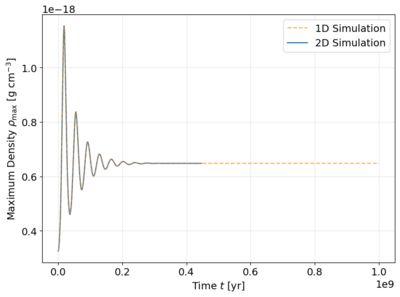

After determining the homogeneous asymptotic solution branches, it must first be clarified which of these contributions asymptotically dominates as \(x\to\infty\). This is crucial for the further construction of the particular solutions, since the coupling terms in the two differential equations are generated by the other perturbation quantity in each case. Strictly speaking, the general solution of the other equation would have to be inserted into the respective inhomogeneity. Since this is not directly possible because of the coupling, the asymptotically most slowly decaying homogeneous solution branch is used first in the following in order to determine the particular contribution generated by it. Since the actual asymptotic structure of the coupled system is additionally influenced by the induced particular contributions, it must then be checked whether these remain subdominant with respect to the homogeneous contributions or become asymptotically dominant.